The 73rd and Last Day of the Season of The Aftermath. Eye Day is celebrated by playing Discordian Games.

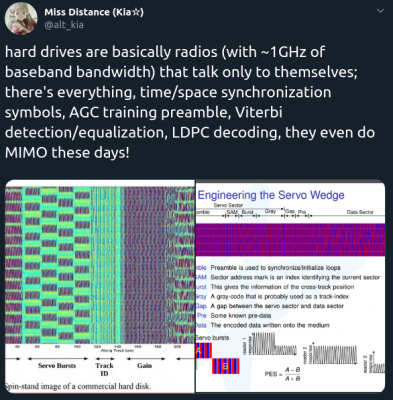

time/space synchronization symbols, AGC training preamble, Viterbi detection/equalization, LDPC decoding and MIMO

Of course this post is talking about hard disks. The ones with spinning disks and read/write heads flying very close to the disks’ surface.

There are several links to the source papers and works discussing the findings – take a look into this nice rabbit hole:

RaspberryPis to Access Points!

Current generations of Raspberry Pi single-board computers (from the 3 up) already have WiFi on board. That is great, because it can be combined with the internal Ethernet port or even additional USB network interfaces to create a nice wired/wireless router. This is what the RaspAP project is about:

This project was inspired by a blog post by SirLagz about using a web page rather than ssh to configure wifi and hostapd settings on the Raspberry Pi. I began by prettifying the UI by wrapping it in SB Admin 2, a Bootstrap based admin theme. Since then, the project has evolved to include greater control over many aspects of a networked RPi, better security, authentication, a Quick Installer, support for themes and more. RaspAP has been featured on sites such as Instructables, Adafruit, Raspberry Pi Weekly and Awesome Raspberry Pi and implemented in countless projects.

This really is going to be very useful while travelling. I plan to replace my GL-INET router, which is showing signs of age.

SimpleHUD is coming

DIRECTIVE 2009/24/EC – Article 6 – Decompilation

Article 6

Decompilation

1. The authorisation of the rightholder shall not be required where reproduction of the code and translation of its form within the meaning of points (a) and (b) of Article 4(1) are indispensable to obtain the information necessary to achieve the interoperability of an independently created computer program with other programs, provided that the following conditions are met:

(a) those acts are performed by the licensee or by another person having a right to use a copy of a program, or on their behalf by a person authorised to do so;

(b) the information necessary to achieve interoperability has not previously been readily available to the persons referred to in point (a); and

(c) those acts are confined to the parts of the original program which are necessary in order to achieve interoperability.

2. The provisions of paragraph 1 shall not permit the information obtained through its application:

(a) to be used for goals other than to achieve the interoperability of the independently created computer program;

(b) to be given to others, except when necessary for the interoperability of the independently created computer program; or

(c) to be used for the development, production or marketing of a computer program substantially similar in its expression, or for any other act which infringes copyright.

3. In accordance with the provisions of the Berne Convention for the protection of Literary and Artistic Works, the provisions of this Article may not be interpreted in such a way as to allow its application to be used in a manner which unreasonably prejudices the rightholder’s legitimate interests or conflicts with a normal exploitation of the computer program.

Celebrate Giftmas

A Whollyday also known as Santa Claus Day occurring on 67 Aftermath aka 25 December.

A holyday celebrating the capitalist gift giving season.

Do the money dance, waving money in fans whilst blasting Money by Pink Floyd. Identify a ridiculously worthless toy and encourage all small children to want one. Select greyfaces to receive a particular theme gift (like banana-flavored edible underwear) from thousands.
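
The date arithmetic behind "67 Aftermath aka 25 December" can be sketched in a few lines of Python. This is only a minimal sketch, assuming the usual Discordian calendar layout of five 73-day seasons (Chaos, Discord, Confusion, Bureaucracy, The Aftermath) with St. Tib's Day inserted on 29 February in leap years; the function name `discordian` is made up for illustration.

```python
import calendar
from datetime import date

# Assumed season order of the Discordian calendar, 73 days each.
SEASONS = ["Chaos", "Discord", "Confusion", "Bureaucracy", "The Aftermath"]
DAYS_PER_SEASON = 73


def discordian(d: date) -> str:
    """Return '<day> <season>' for a Gregorian date (hypothetical helper)."""
    if d.month == 2 and d.day == 29:
        # The leap day sits outside the seasons.
        return "St. Tib's Day"
    day_of_year = d.timetuple().tm_yday  # 1..366
    # In leap years, skip the leap day so later dates keep their usual number.
    if calendar.isleap(d.year) and day_of_year > 60:
        day_of_year -= 1
    season_index, day_index = divmod(day_of_year - 1, DAYS_PER_SEASON)
    return f"{day_index + 1} {SEASONS[season_index]}"


if __name__ == "__main__":
    print(discordian(date(2019, 12, 25)))
    print(discordian(date(2019, 12, 31)))
```

Under these assumptions 25 December 2019 comes out as `67 The Aftermath` and 31 December 2019 as `73 The Aftermath`, the last day of the season.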

Giftmas

Tabemono – from a name to UX and UI…

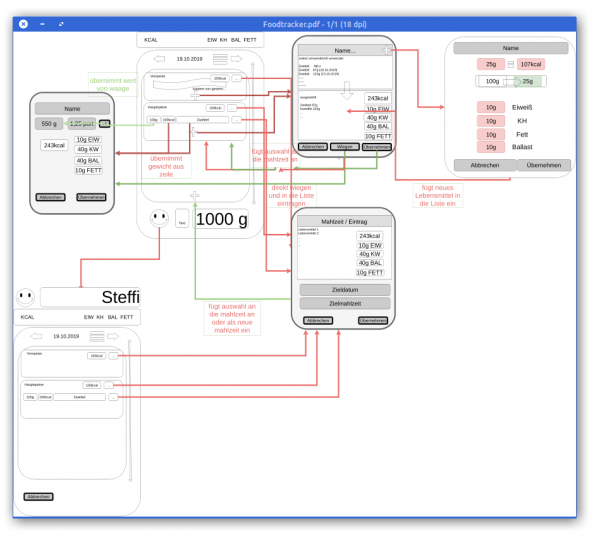

As you might know by now, I am re-implementing MyFitnessPal functionality in my own application, so that it is more deeply integrated with kitchen hardware and my own personal use-cases rather than being an ad-infested, subscription-based 3rd-party application.

So the development of this is ongoing, but I wanted to note down some progress and explanation.

Let’s start with explaining the name: Tabemono.

It really does mean something – and as some might have guessed – in Japanese:

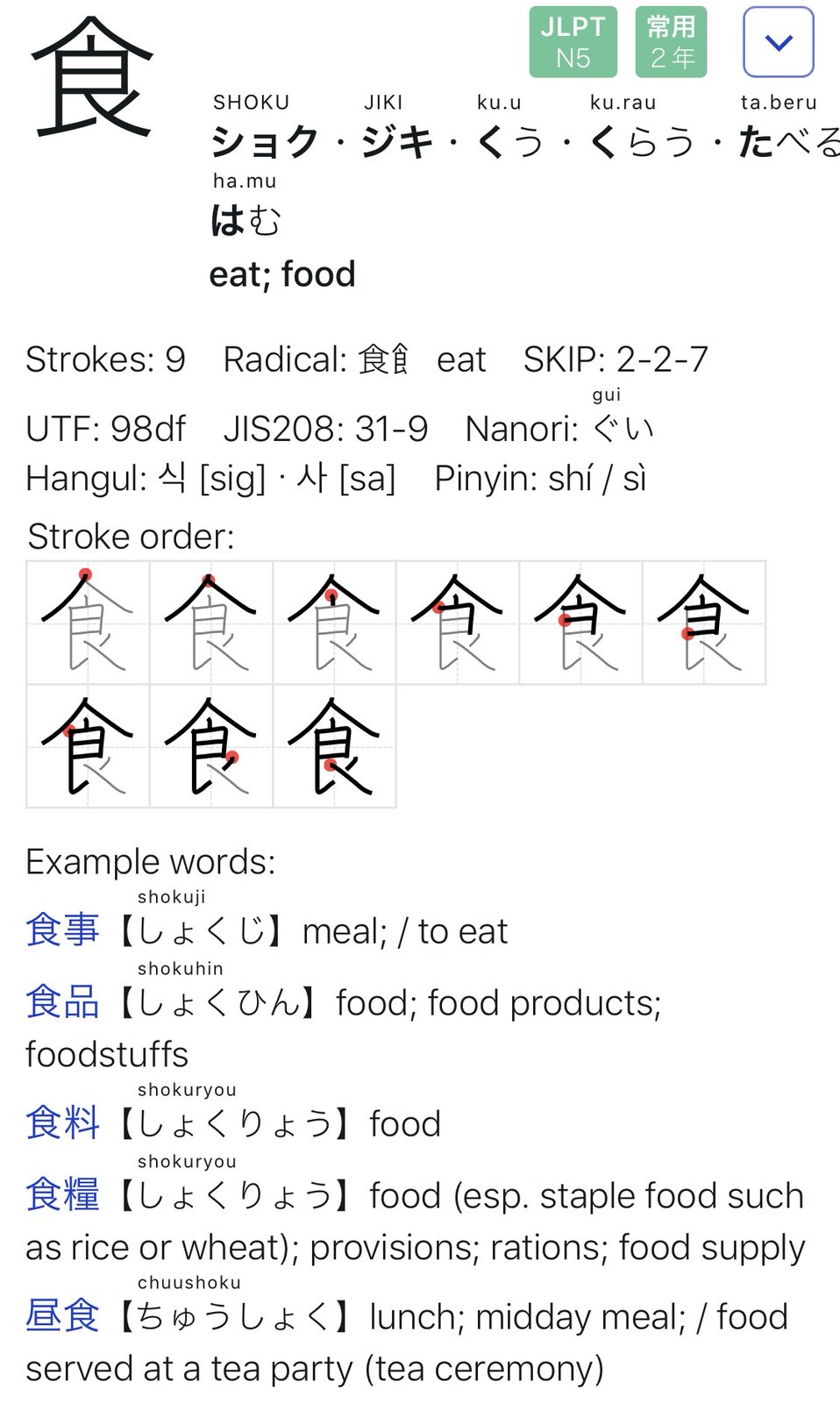

Tabemono – 食べ物

Taking just the first Kanji:

Implementing the UI from the UX has proven to be as challenging as expected.

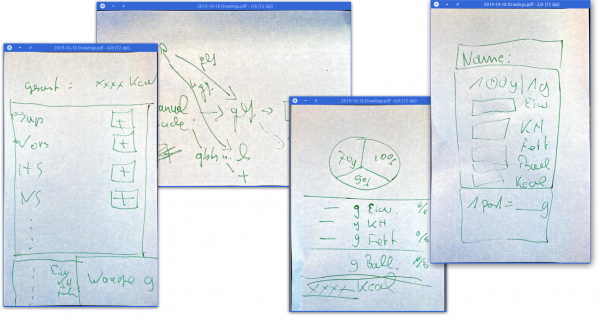

When we started to toss around the idea of re-implementing our food-tracking needs, we started with a simple scribble on post-it notes.

This quickly led to a digital version to better reflect what we wanted to happen during the different steps of use…

It wasn’t nice, but it did act as a reminder of what we wanted to achieve.

The first thing we learned here was that this will all evolve while we are working on it.

So during a long international flight I spent the better part of 11 hours turning the above drawing into something resembling an iOS user interface mock-up. With the help of Adobe XD (free for 1 private project) I clicked along, and after 10 hours this was the video I made of the click-dummy:

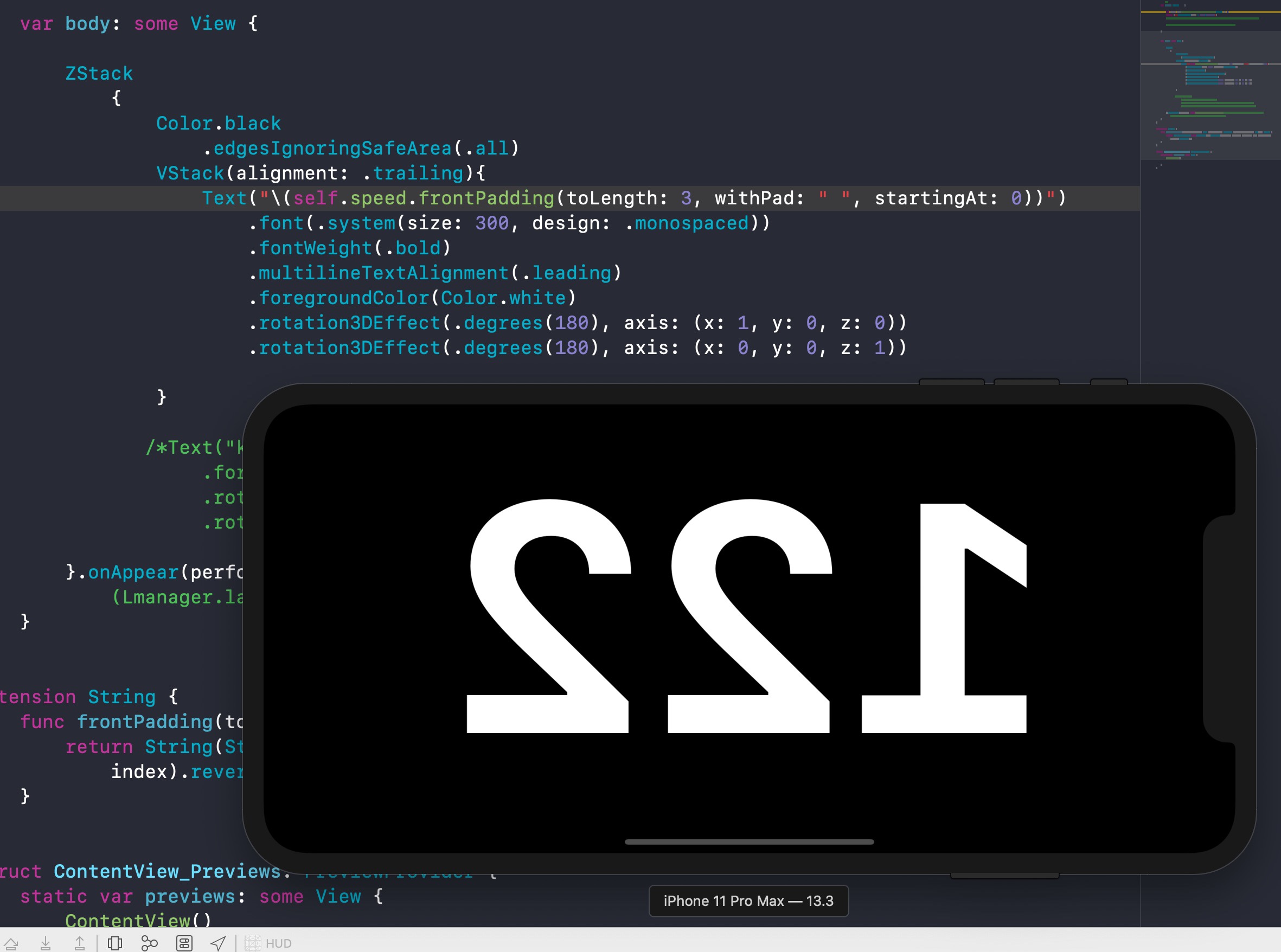

Since then I’ve spent maybe 1 more day and started the SwiftUI-based implementation of the actual iOS application.

And this brought the first revelation: There are so many ideas that might make sense on paper and in a click-dummy. But only because those are just tools and not reality. It’s absolutely crucial to really DO the things rather than imagine them.

And so the second revelation came: If I had one piece of advice for any product manager or developer out there: Go on and pick a project and try to go full-circle.

You ain’t full stack if you’re missing out on the understanding of the work and skill that your team members have and need.

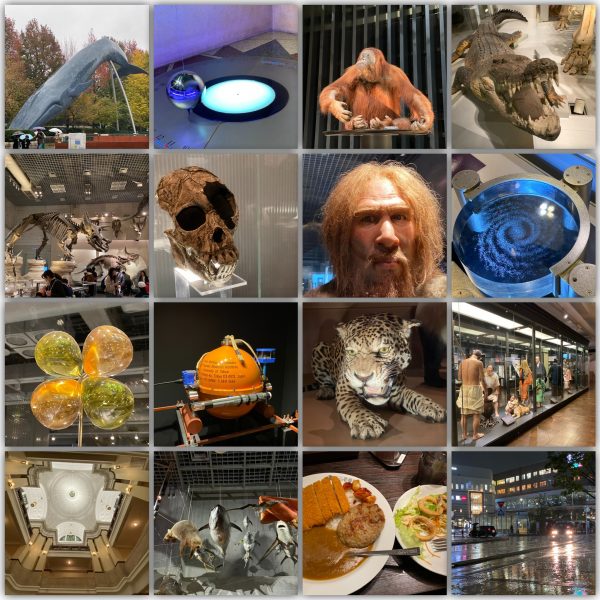

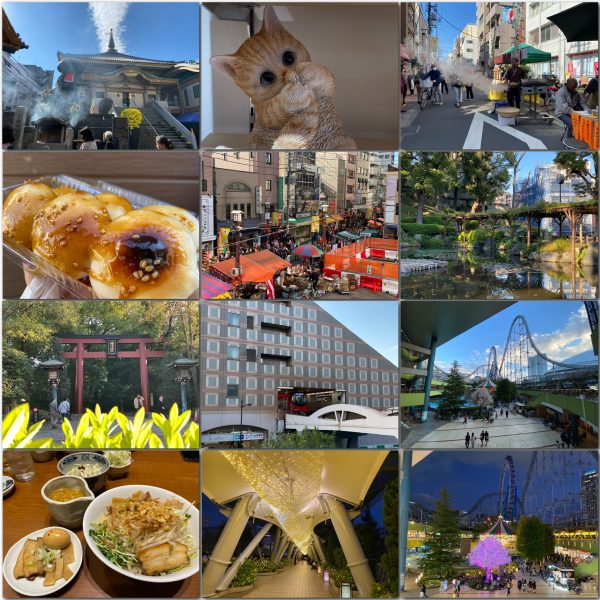

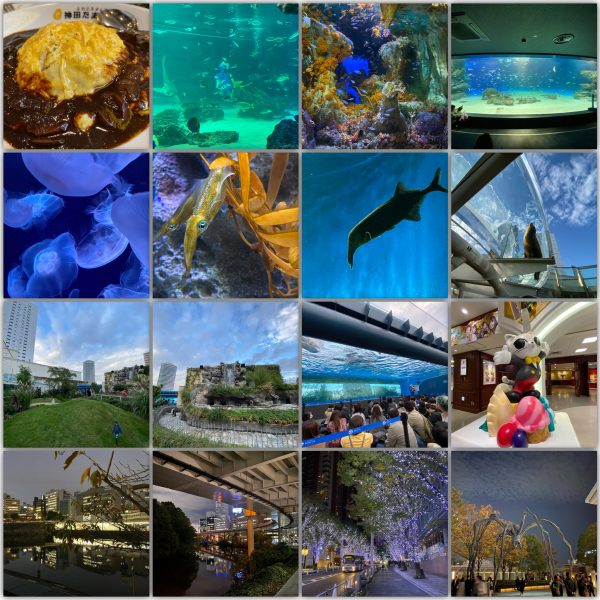

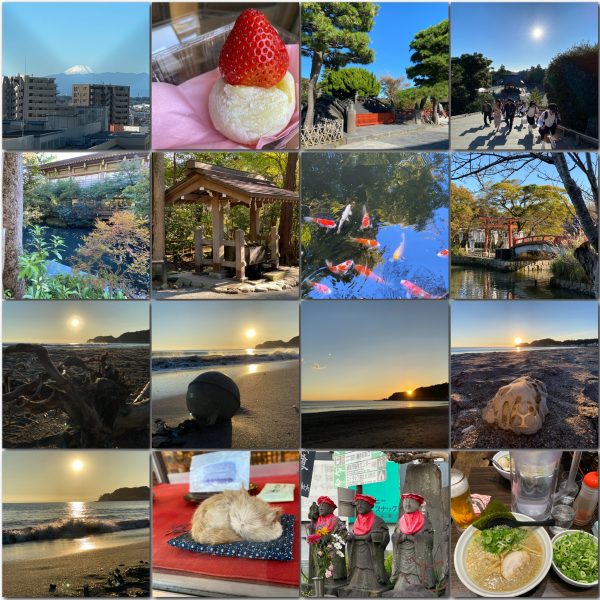

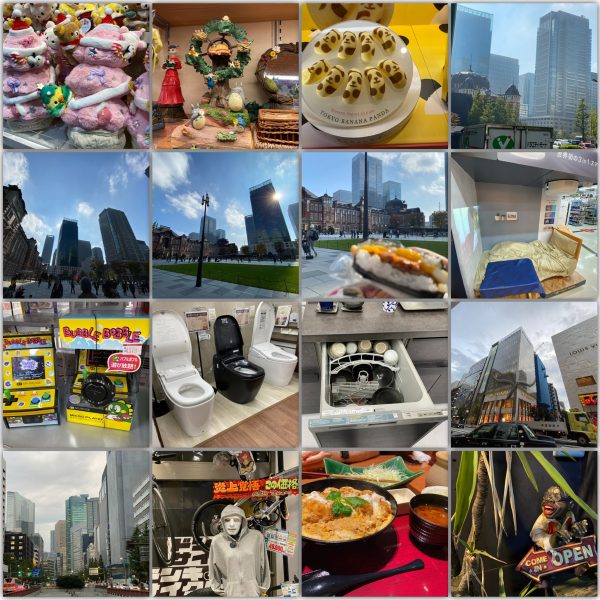

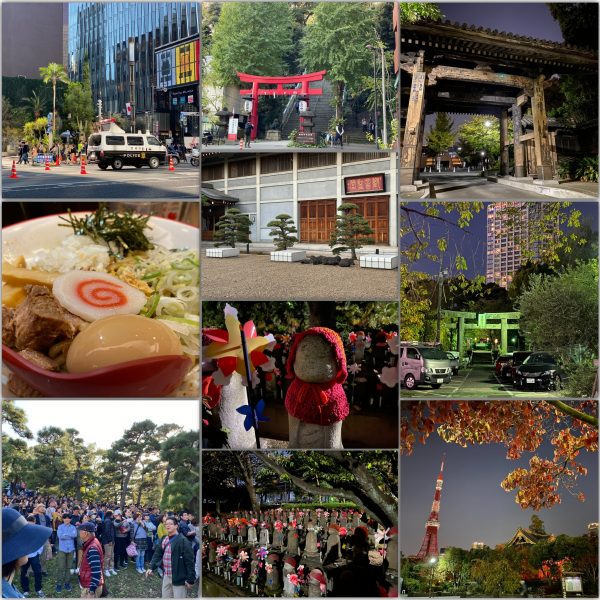

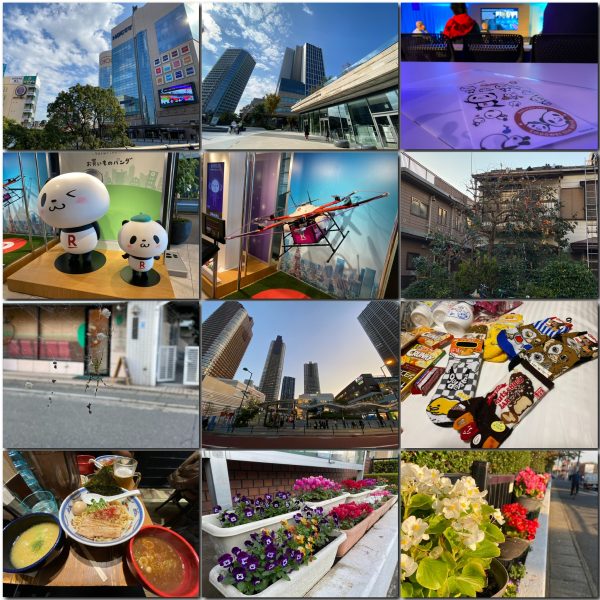

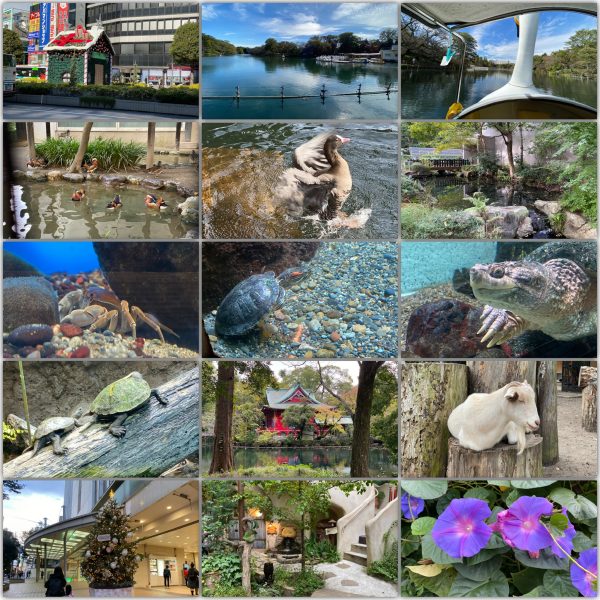

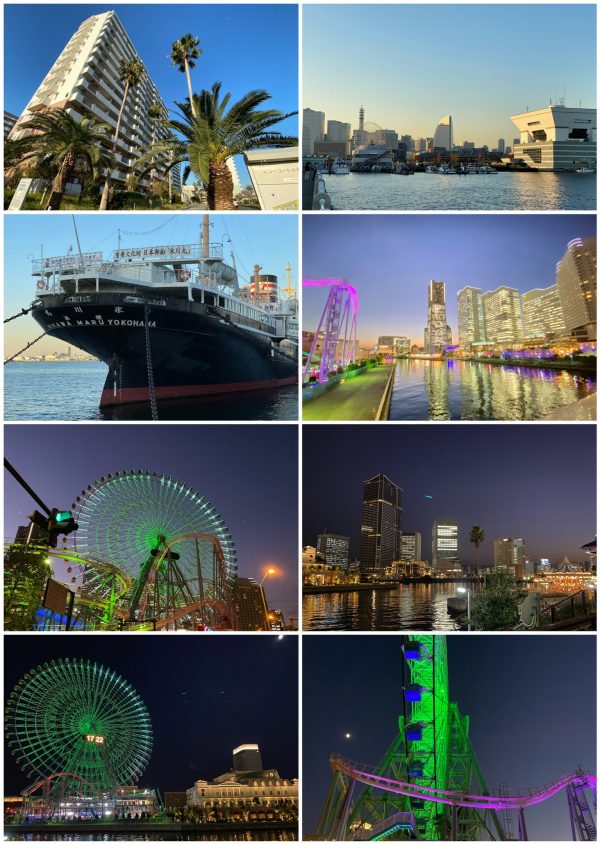

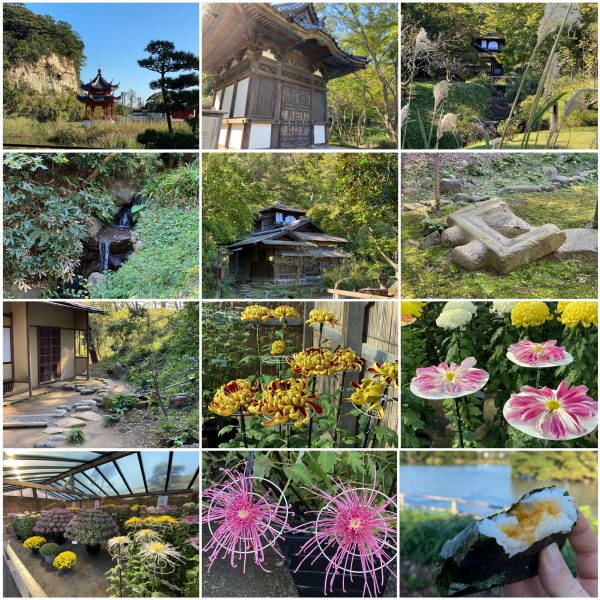

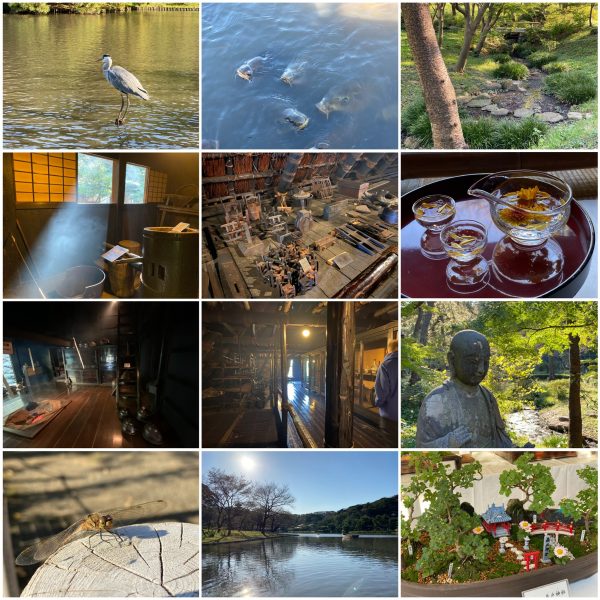

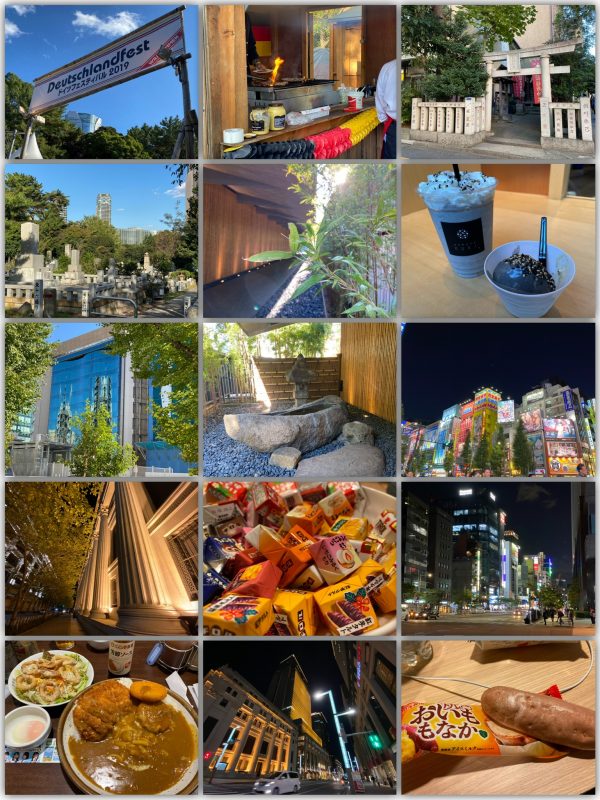

Japan November 2019: Day 23

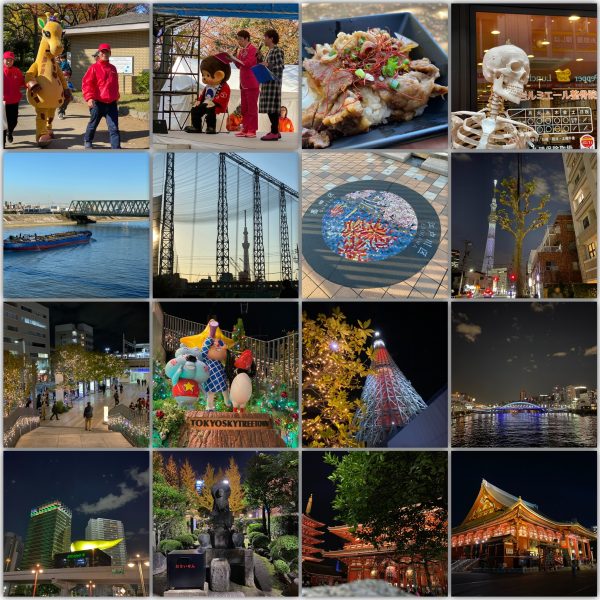

Japan November 2019: Day 22

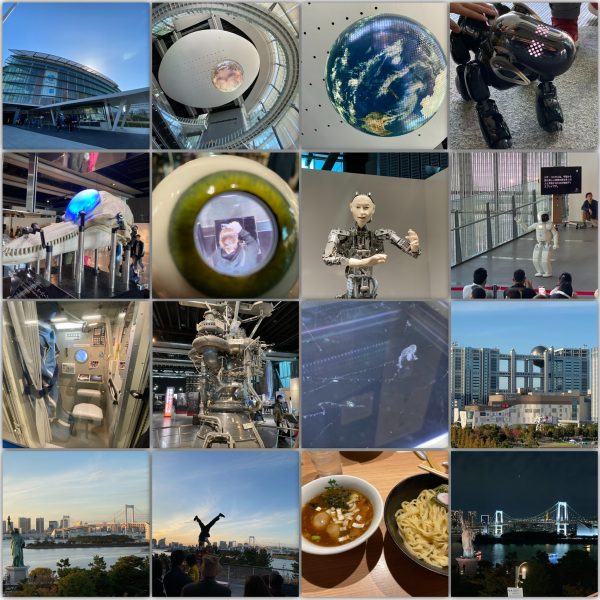

Japan November 2019: Day 21

HUD progress

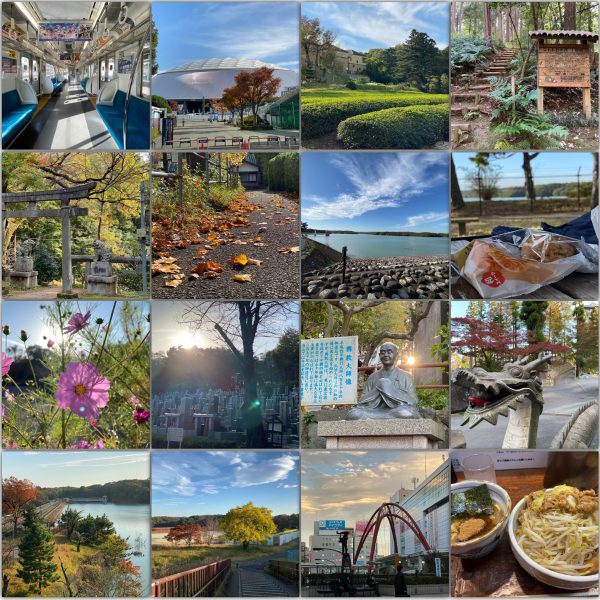

Japan November 2019: Day 20

Japan November 2019: Day 19

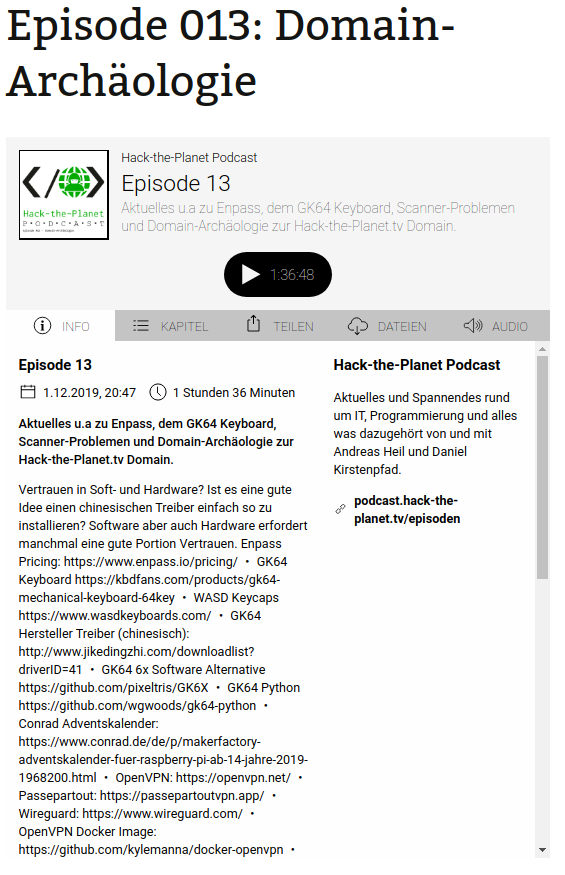

Hack-the-Planet Podcast: Episode 15

Japan November 2019: Day 18

Japan November 2019: Day 17

Japan November 2019: Day 16

Japan November 2019: Day 15

Japan November 2019: Day 14

Japan November 2019: Day 13

Japan November 2019: Day 12

Japan November 2019: Day 11

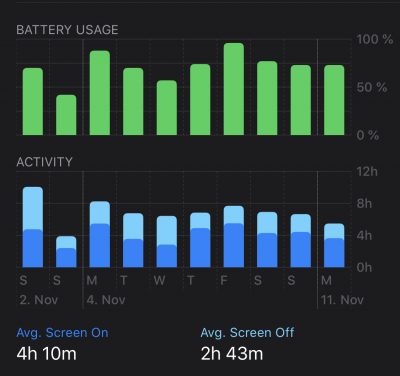

iPhone 11 Pro battery is…

…quite amazing.

I upgraded to the current iPhone generation just before travelling to Japan. I was expecting some improvement in battery life, but I did not dare to think I would get THIS.

I’ve taken the last 3 generations of iPhones on trips to Japan, and they all went through the same exercises and quite comparable day schedules.

The amount of navigation, screen time, picture taking and just browsing the web / translating left all 3 previous generations out of juice around midday.

Not this generation. Apparently something has changed. Not really in terms of screen time – screen-on time got better, but not by as much as the overall usage time of the device with the screen off.

How much power and runtime I am getting out of the device without having to reach for a battery pack or power supply is astonishing. I am using my Apple Watch for navigation cues, so I am not really reaching for the phone for that. But that means the phone is constantly used otherwise to take pictures, make payments, translate…

I am comfortably leaving all battery packs and chargers at home, whereas before I was always charging the phone for the first time at lunchtime. I usually had to charge 2 times a day to get through.

With this generation’s iPhone 11 Pro I am getting through the whole day and reach the hotel just before getting down to 20%.

I am still using it all throughout the day. But this is such a relief that I am confidently getting through a full day of fun. Thumbs up Apple!

approaching Chaos – celebrate Afflux

The 50th Day of the Season of The Aftermath. The Fluxday marking the approach of the Season of Chaos.

Chaos (season)