when AI dreams…

Video incorporating image processing via Python and the BigGAN generative adversarial neural network to breed new images. There are papers about “high fidelity natural image synthesis”.

Anthony Baldino – Like Watching Ghosts from his recently released album Twelve Twenty Two

Anthony Baldino

generative art: flowers

It started with this tweet about someone called Ayliean apparently drawing a plant based on a set of rules and the roll of a die.

And because generative art in itself is fascinating I am frequently pulled into such things. Like this dungeon generator or these city maps or generated audio or face generators or buildings and patterns…

On the topic of flowers there’s another actual implementation of the above-mentioned concept available:

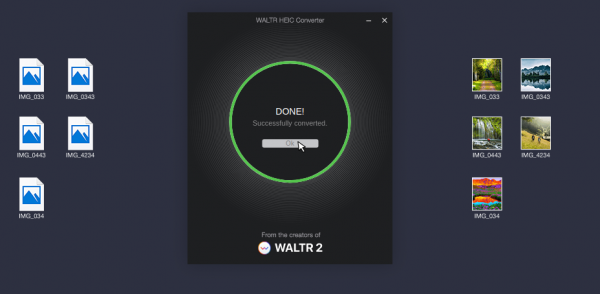

batch convert HEIF/HEIC pictures

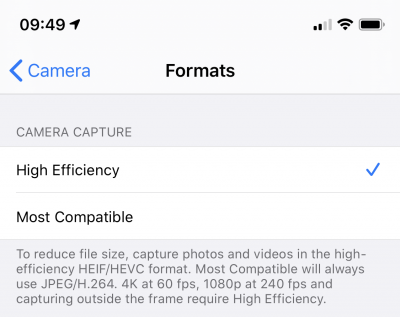

When you own a recent iOS device (iOS 11 and up) you’ve got the choice between “High Efficiency” and “Most Compatible” as the format in which the camera app stores all pictures.

“Most Compatible” is the JPEG format that is widely used around the internet and by other cameras out there. “High Efficiency” comes from the introduction of a new file format and new compression/reduction algorithms.

A pointer to more information about the format:

High Efficiency Image File Format (HEIF), also known as High Efficiency Image Coding (HEIC), is a file format for individual images and image sequences. It was developed by the Moving Picture Experts Group (MPEG) and is defined by MPEG-H Part 12 (ISO/IEC 23008-12). The MPEG group claims that twice as much information can be stored in a HEIF image as in a JPEG image of the same size, resulting in a better quality image. HEIF also supports animation, and is capable of storing more information than an animated GIF at a small fraction of the size.

Wikipedia: HEIF

As Apple is aware, this new format is not compatible with any existing tool chain for working with pictures from cameras. So you would either need new, upgraded tools (the Apple way) or you would need to convert your images to the “older”, not-so-efficient JPEG format.

To my surprise it’s not trivial to find a conversion tool. For Linux I’ve already written about such a tool here.
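For the batch part on Linux, here is a minimal sketch of how such a conversion could be scripted. It assumes the `heif-convert` command from the libheif examples is installed and on the PATH (not necessarily the tool from my earlier post). The function names `plan_conversions` and `convert_all` are made up for this illustration.

```python
import shutil
import subprocess
from pathlib import Path

HEIF_SUFFIXES = {".heic", ".heif"}


def plan_conversions(source_dir, target_dir):
    """Pair every HEIC/HEIF file in source_dir with a .jpg path in target_dir."""
    source_dir, target_dir = Path(source_dir), Path(target_dir)
    return [
        (src, target_dir / (src.stem + ".jpg"))
        for src in sorted(source_dir.iterdir())
        if src.suffix.lower() in HEIF_SUFFIXES
    ]


def convert_all(source_dir, target_dir, tool="heif-convert"):
    """Convert all planned files; 'tool' is assumed to accept 'input output'."""
    if shutil.which(tool) is None:
        raise FileNotFoundError(f"{tool} not found on PATH")
    Path(target_dir).mkdir(parents=True, exist_ok=True)
    jobs = plan_conversions(source_dir, target_dir)
    for src, dst in jobs:
        # check=True stops the batch on the first file that fails to convert
        subprocess.run([tool, str(src), str(dst)], check=True)
    return len(jobs)
```

Calling `convert_all("Pictures/iphone", "Pictures/jpeg")` would then convert one folder in one go; both folder names are placeholders.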

For macOS and Windows, look no further. Waltr2 is an app catering to your conversion needs with a drag-and-drop interface.

It’s advertised as being free and offline. And it works a treat for me.

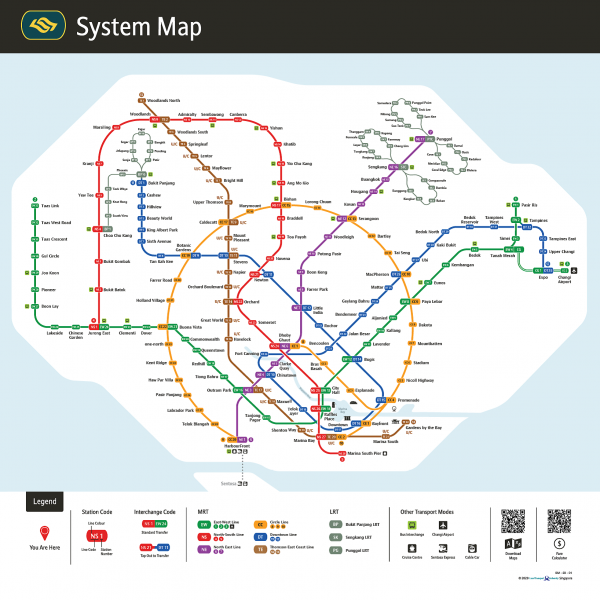

Drawing Transit Maps

Almost exactly 1 year ago I wrote about transit maps. And it seems to be a recurring topic. And rightfully so – it’s an interesting topic.

Along with the presentation of a redesigned Singapore transit map, there’s more content to gather on the “Transit Mapping Symposium” website.

The “Transit Mapping Symposium” will take place in Seoul, South Korea, on the 20th and 21st of April 2020, with researchers and designers meeting up.

The Transit Mapping Symposium is a yearly international gathering of transport networks professionals, a unique opportunity to share achievements, challenges and vision.

Our participants and speakers include experts from all fields of the industry:

– Mapmakers

– Network Operators

– Transport Authorities

– Digital Platforms

– Designers

generative art: 36c3 generator

As I am a bit late to the party on this: There’s, just like last year, a nice generative art tool provided by bleeptrack to generate your very own 36c3 themed headlines/logos:

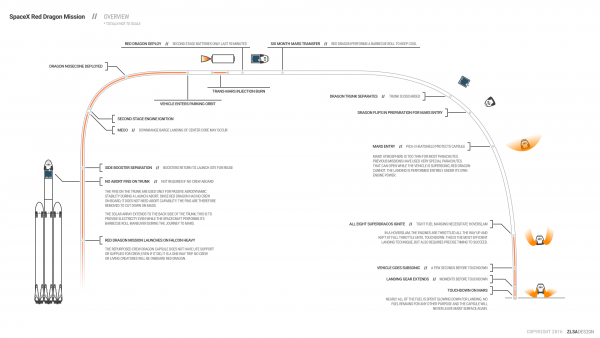

Spaceflight Infographics

A great source of SpaceX related infographics is this page here.

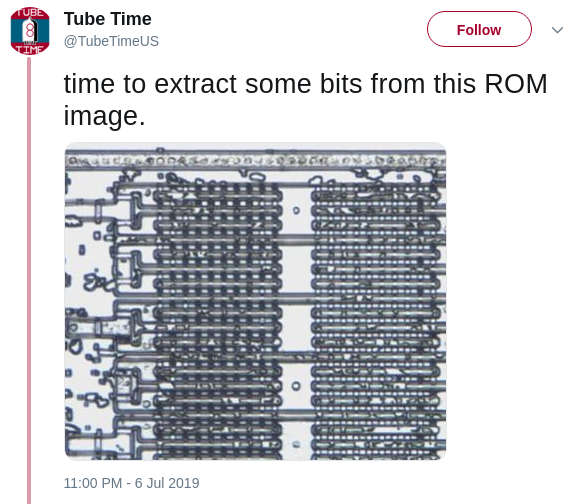

extract bits from ROM

Tube Time is apparently on the job to extract actual data from ROM images extracted out of chips which seemingly are those of a Creative Labs Sound Blaster 1.0 card.

In addition to this excavation work there’s a growing documentation of the inner workings of mentioned Sound Blaster card.

PixelFed – Federated Image Sharing

In search of alternatives to the traditional centralized hosted social networks a lot of smart people have started to put time and thought into what is called “the-federation”.

The Federation refers to a global social network composed of nodes that talk to each other. Each of them is an installation of software which supports one of the federated social web protocols.

What is The Federation?

You may already have heard about projects like Mastodon, Diaspora*, Matrix (Synapse) and others.

The PixelFed project seems to gain some traction as apparently the first documentation and sources are made available.

PixelFed is a federated social image sharing platform, similar to instagram. Federation is done using the ActivityPub protocol, which is used by Mastodon, PeerTube, Pleroma, and more. Through ActivityPub PixelFed can share and interact with these platforms, as well as other instances of PixelFed.

the-federation

I am posting this here as a note in my personal logbook.

Given that there’s already a Dockerfile I will give it a try as soon as possible.

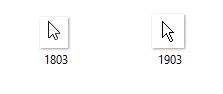

I don’t like the long-tail Windows 10 default cursor

The first device in my household recently updated itself to the newest Windows 10 1903 build.

From the very first moment of the login screen appearing and logging in, I could tell that I hate one specific change that has made it into this latest update.

And it’s the default mouse cursor.

Back in the Pre-Windows Vista days, when I used to work for Microsoft, I was using the latest internal build of Windows and just around the first RTM (release-to-manufacture) build they touched up on the final designs.

I remember vividly when the mouse cursor changed from the one we knew and had used since Windows 3 to a shorter-tailed, more “high-def” looking one.

Since then there were a couple of changes on the cursor but the general design was kept.

Now apparently with the latest Windows 10 update from 1803 to 1903 I got a new – old default mouse cursor.

right: booh!

By reflex I changed it back to the one I love and stored safely in a backup. I cannot stand the long tail and the weird pixel-ness of the cursor. It just looks kinda weird to my eyes.

Which one do you like better?

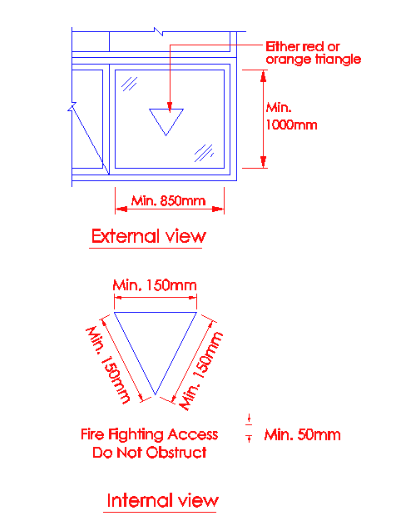

a red triangle on the window

When you walk around in Tokyo you will find that many buildings have red-triangle markings on some of the windows / panels on the outside.

I noticed them as well but I could not think of an explanation. Digging for information brought up this:

Panels to fire access openings shall be indicated with either a red or orange triangle of equal sides (minimum 150mm on each side), which can be upright or inverted, on the external side of the wall and with the wordings “Firefighting Access – Do Not Obstruct” of at least 25mm height on the internal side.

Singapore Firefighting Guide 2018

The red triangles on the buildings/hotel windows in Japan are the rescue paths to be used in case of fire. All fire fighters know the meaning of this red triangle on the windows. Red in color makes it prominent, to be located easily by the fire fighters in case of a fire incident. During a fire incident, windows are generally broken to allow for smoke and other gases to come out of the building.

Triangles in Japan

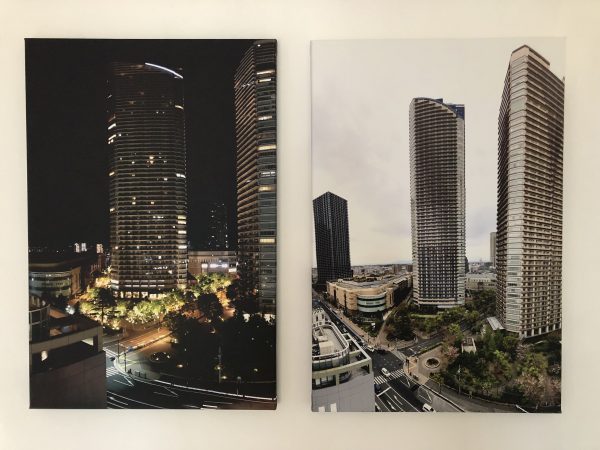

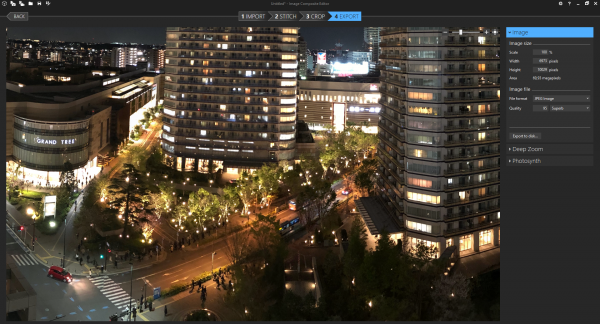

グランツリー武蔵小杉 and Park City Forest Towers on canvas

I’ve blogged about those pictures taken day- and night-time before. I’ve also blogged about how they were produced.

With a picture spot freed at one of the walls in our house we decided to print the day and night pictures on canvas and have them side-by-side. And it looks great, I think.

Bitmap & tilemap generation with the help of ideas from quantum mechanics

You can get a glimpse of the beautiful side of science through visualizations and algorithms that output visual results.

This is an example of producing lots and lots of complex data (houses!) from a small set of input data. It is widely used in game development but can also be helpful for generating parameterized test and simulation environments for machine learning.

So before sending you over to the more detailed explanation, here is the visual example:

These are a lot of different house images, generated using a program called WaveFunctionCollapse:

WFC initializes output bitmap in a completely unobserved state, where each pixel value is in superposition of colors of the input bitmap (so if the input was black & white then the unobserved states are shown in different shades of grey). The coefficients in these superpositions are real numbers, not complex numbers, so it doesn’t do the actual quantum mechanics, but it was inspired by QM. Then the program goes into the observation-propagation cycle:

On each observation step an NxN region is chosen among the unobserved which has the lowest Shannon entropy. This region’s state then collapses into a definite state according to its coefficients and the distribution of NxN patterns in the input.

On each propagation step new information gained from the collapse on the previous step propagates through the output.

On each step the overall entropy decreases and in the end we have a completely observed state, the wave function has collapsed.

It may happen that during propagation all the coefficients for a certain pixel become zero. That means that the algorithm has run into a contradiction and can not continue. The problem of determining whether a certain bitmap allows other nontrivial bitmaps satisfying condition (C1) is NP-hard, so it’s impossible to create a fast solution that always finishes. In practice, however, the algorithm runs into contradictions surprisingly rarely.

Wave Function Collapse algorithm has been implemented in C++, Python, Kotlin, Rust, Julia, Go, Haxe, JavaScript and adapted to Unity. You can download official executables from itch.io or run it in the browser. WFC generates levels in Bad North, Caves of Qud, several smaller games and many prototypes. It led to new research. For more related work, explanations, interactive demos, guides, tutorials and examples see the ports, forks and spinoffs section.
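To make the observation-propagation cycle a bit more tangible, here is a small sketch in Python. It is not the original program: instead of learning NxN patterns from an input bitmap it uses a hand-written tile set with adjacency rules (closer to what is usually called the simple tiled model). The tiles, weights and function names are all assumptions made up for this illustration.

```python
import math
import random

# Hypothetical tile set: sea may never touch land directly.
TILES = {"sea": 3.0, "coast": 1.0, "land": 3.0}
PAIRS = [("sea", "sea"), ("sea", "coast"), ("coast", "coast"),
         ("coast", "land"), ("land", "land")]
COMPATIBLE = {(a, b) for a, b in PAIRS} | {(b, a) for a, b in PAIRS}


def shannon_entropy(options, weights):
    """Entropy of the weighted distribution over the remaining options."""
    total = sum(weights[t] for t in options)
    return -sum((weights[t] / total) * math.log(weights[t] / total)
                for t in options)


def neighbours(x, y, width, height):
    """Yield the 4-connected neighbours that lie inside the grid."""
    for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nx, ny = x + dx, y + dy
        if 0 <= nx < width and 0 <= ny < height:
            yield nx, ny


def collapse(width, height, weights, compatible, rng):
    """One attempt; returns a dict cell -> tile, or None on contradiction."""
    wave = {(x, y): set(weights) for x in range(width) for y in range(height)}
    while True:
        open_cells = [c for c, opts in wave.items() if len(opts) > 1]
        if not open_cells:
            return {c: next(iter(opts)) for c, opts in wave.items()}
        # Observation: pick the lowest-entropy cell, ties broken randomly.
        cell = min(open_cells,
                   key=lambda c: (shannon_entropy(wave[c], weights), rng.random()))
        options = sorted(wave[cell])
        wave[cell] = {rng.choices(options, [weights[t] for t in options])[0]}
        # Propagation: remove neighbour options that lost all support.
        stack = [cell]
        while stack:
            cx, cy = stack.pop()
            for n in neighbours(cx, cy, width, height):
                keep = {t for t in wave[n]
                        if any((s, t) in compatible for s in wave[(cx, cy)])}
                if not keep:
                    return None  # contradiction: no option left for this cell
                if keep != wave[n]:
                    wave[n] = keep
                    stack.append(n)


def generate(width, height, seed=0, max_tries=10):
    """Retry from scratch whenever an attempt runs into a contradiction."""
    rng = random.Random(seed)
    for _ in range(max_tries):
        grid = collapse(width, height, TILES, COMPATIBLE, rng)
        if grid is not None:
            return grid
    raise RuntimeError("no contradiction-free result found")
```

`generate(8, 6)` returns a dictionary mapping `(x, y)` to a tile name. Restarting on a contradiction is the simplest possible strategy; other implementations may backtrack instead.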

Hain, Bamberg

Smile

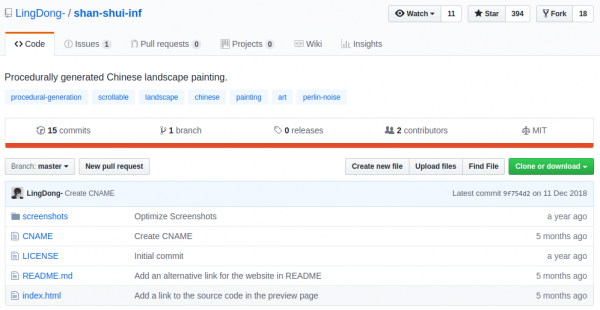

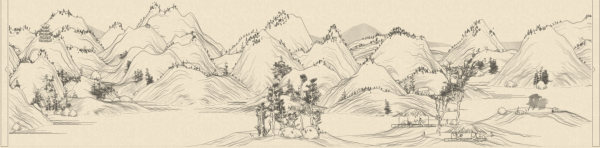

procedural generated traditional Chinese landscape scrolls

{Shan, Shui}* is inspired by traditional Chinese landscape scrolls (such as this and this) and uses noises and mathematical functions to model the mountains and trees from scratch. It is written entirely in javascript and outputs Scalable Vector Graphics (SVG) format.

https://github.com/LingDong-/shan-shui-inf

This is quite impressive and I am thinking about pushing that into the header of this blog :-) It’s just too nice looking to pass on.
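To get a feeling for the “noises and mathematical functions” idea, here is a tiny sketch in Python. It is not how the project itself works (that one is JavaScript and far more elaborate). It only layers a few octaves of 1D value noise into a ridge line and writes it out as an SVG polygon. All names and parameters are my own assumptions.

```python
import math
import random


def value_noise_1d(n_points, octaves=4, seed=0):
    """Sum several octaves of smoothed random values, normalised to 0..1."""
    rng = random.Random(seed)
    heights = [0.0] * n_points
    amplitude, total = 1.0, 0.0
    for octave in range(octaves):
        n_knots = 2 ** (octave + 2)  # 4, 8, 16, ... segments per octave
        knots = [rng.random() for _ in range(n_knots + 1)]
        for i in range(n_points):
            pos = i / (n_points - 1) * n_knots
            k = min(int(pos), n_knots - 1)
            t = (1 - math.cos((pos - k) * math.pi)) / 2  # cosine easing
            heights[i] += amplitude * (knots[k] * (1 - t) + knots[k + 1] * t)
        total += amplitude
        amplitude /= 2  # each finer octave contributes half as much
    return [h / total for h in heights]


def mountain_svg(width=400, height=120, seed=0):
    """Return an SVG document with one filled mountain silhouette."""
    ridge = value_noise_1d(width // 4 + 1, seed=seed)
    step = width / (len(ridge) - 1)
    points = " ".join(
        f"{i * step:.1f},{height - h * height * 0.8:.1f}"
        for i, h in enumerate(ridge)
    )
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}">'
        f'<polygon points="0,{height} {points} {width},{height}" fill="#444"/>'
        "</svg>"
    )
```

Writing the result of `mountain_svg(seed=7)` to a file gives a silhouette you can open in any browser; a different seed gives a different mountain.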

let AI convert videos to comic strips for you

Artificial Intelligence is used more and more to achieve tasks only humans could do before. Especially in the areas that need a certain technique to be mastered AI goes above and beyond what humans would be able to do.

In this case a team has implemented something that takes video inputs and generates a comic strip from this input. Imagine it to look like this:

In this paper, we propose a solution to transform a video into a comics. We approach this task using a neural style algorithm based on Generative Adversarial Networks (GANs).

Paper

They even made a nice website where you can try it yourself with any YouTube video you want:

Hyper-realistic train on canvas

I mentioned this hyper-realistic painting of a Japanese train previously. And now this painting has been printed on canvas and is on our wall:

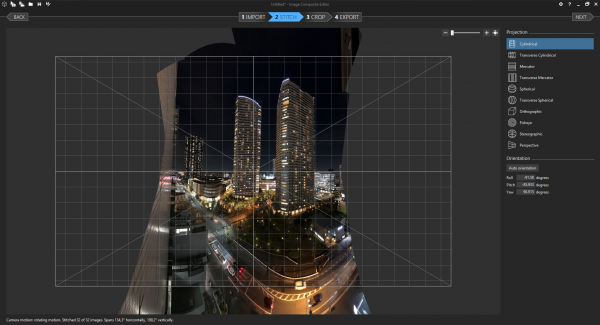

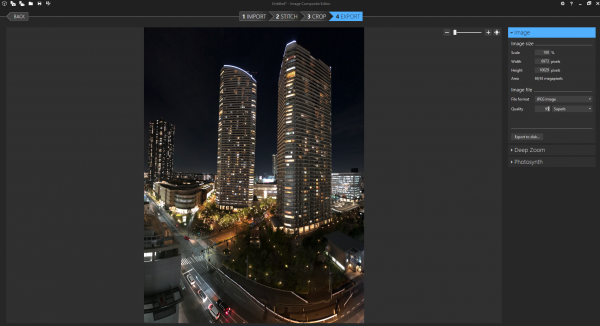

Panoramic Images free (-hand)

I really like taking panoramic images whenever I can. They convey a much better impression of the situation I’ve experienced than a single image. At least for me. And because of the way they are made – stitched together from multiple images – they are most of the time very big. A lot of pixels to zoom into.

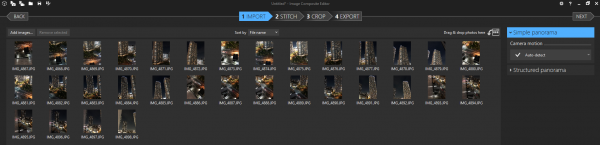

The process of taking such a panoramic image is very straightforward:

- Take overlapping pictures of the scenery in multiple layers if possible. If necessary freehand.

- Make sure the pictures overlap enough but there’s not a lot of questionable movement in them (like the same person appearing in multiple pictures…)

- Copy them to a PC.

- Run the free Microsoft Image Composite Editor.

- Pre-/Post process for color.

The tools used are all free. My recommendation is the Microsoft Image Composite Editor, which itself started as a Microsoft Research project.

Image Composite Editor (ICE) is an advanced panoramic image stitcher created by the Microsoft Research Computational Photography Group. Given a set of overlapping photographs of a scene shot from a single camera location, the app creates high-resolution panoramas that seamlessly combine original images. ICE can also create panoramas from a panning video, including stop-motion action overlaid on the background. Finished panoramas can be saved in a wide variety of image formats,

Image Composite Editor

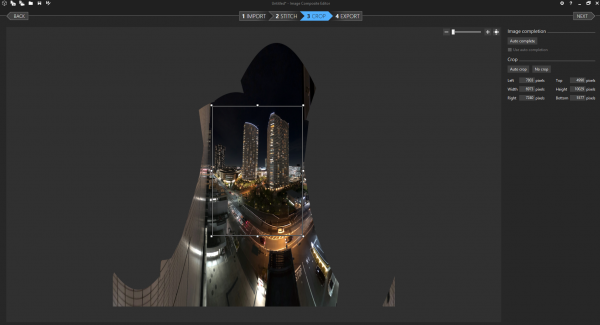

Here’s what the stitching process of the Musashi-kosugi Park City towers night image looked like:

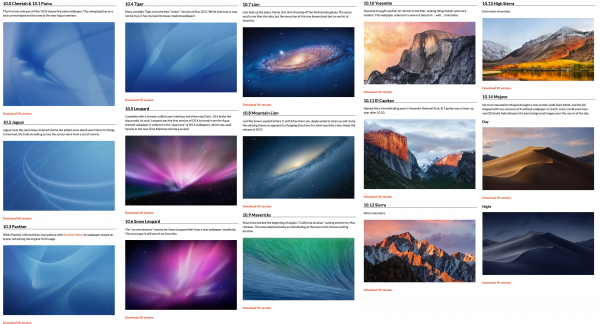

all macOS wallpapers in 5k

Every major version of Mac OS X/macOS has come with a new default wallpaper. As you can see, I have collected them all here.

While great in their day, the early wallpapers are now quite small in the world of 5K displays.

Major props to the world-class designer who does all the art of Relay FM, the mysterious @forgottentowel, for upscaling some of these for modern screens.

https://512pixels.net/projects/default-mac-wallpapers-in-5k/

“kachung” + shutter sound

When you take a picture with an iPhone these days it does generate haptic feedback – a “kachung” you can feel. And a shutter sound.

Thankfully the shutter sound can be disabled in many countries. I know it can’t be disabled on iPhones sold in Japan. Which kept me from buying mine in Tokyo. Even when you switch the regions to Europe / Germany it’ll still produce the shutter sound.

Anyway: With my iPhone, which was purchased in Germany, I can disable the shutter sound. But it won’t disable the haptic “kachung”.

It’s interesting that Apple added this vibration to the activity of taking a picture. Other camera manufacturers go out of their way to decouple as much vibration as possible, even to the extent that they will open the shutter and lock up the mirror in their DSLRs before actually taking the picture – just so that the movement of the mirror and shutter doesn’t induce vibrations while the picture is taken.

With mirrorless cameras that vibration is gone. But now it is being introduced again?

Am I the only one finding this strange?

an afternoon in the park

Living in a UNESCO World Heritage city has a lot of advantages. One of them is the number of nice parks and areas to enjoy the spring sun.

We’ve spent the afternoon walking around and taking some pictures.

sakura season forecast

I have been visiting Japan for almost 7 years now but I’ve never actually been there when the famous cherry blossom – or sakura – was in full force.

As every year, there’s a forecast map for this year’s season and it gets updated frequently:

- First Forecast Issued January 24th

- Second Forecast Issued February 7th <- today

- Third Forecast Issued February 21st

- Fourth Forecast Issued February 28th

Instagram – until now

I had already added a couple of pictures to my Instagram account – mainly while abroad. Pictures that I consider nice enough to be shared.

Of course my latest switch away from those public silos will include having those pictures posted mainly on this website and maybe as a side-note on those services as well.

To begin with I will have a separate page created that will host those pictures I consider nice enough to be shared.
