In part 1 I wrote a bit about this great game and shared some screenshots. By now I’ve finished the story and almost all side quests, and I still see it as the best game in years.

Anyway, here are more pictures taken in-game (sometimes stitched):

For the first time in the last 10ish years I am back playing a game that really impresses me. The story, the world and the technology of Cyberpunk 2077 really are a step forward.

It’s a first in many respects for me. I do not own a PC capable of playing Cyberpunk 2077 at any quality level. Usually I play games on consoles like the PlayStation. But for this one I have chosen to play on the PC platform. But how?

I am using game streaming. The game is rendered in a datacenter on a PC and graphics card I am renting for the purpose of playing the game. And it simply works great!

So I am playing a next-generation open-world game with technical breakthroughs like ray tracing, which produces really great graphics. The picture is streamed over the internet to my big-screen TV, and my keyboard and mouse input is forwarded to that datacenter, all without (for me) noticeable lag or quality issues.

The only downside I can see so far is that sooo many people like to play it this way that there are not enough machines (gaming rigs) available for all the players who want one, so there’s a queue in the evening.

But I am doing what I am always doing when I play games. I take screenshots. And if the graphics are great I am even trying to make panoramic views of the in-game graphics. Remember my GTA V and BioShock Infinite pictures?

So here is the first batch of pictures, some stitched together from 16 or more single screenshots. Look at the detail! Again: these are in-game screenshots. Click on them to make them bigger, and right-click to open the source to really zoom into them.

Courtesy of Sam Zeloof I found out that I’ve got a good part of an FSTM in a cupboard here.

Apparently my choice of purchasing the HD-DVD drive for the Xbox 360 will ultimately pay off!! As we all know, Blu-ray won that format war back in the day.

But now it seems that this below would be useable for something:

Over the life of nuclear fuel, inhomogeneous structures develop, negatively impacting thermal properties. New fuels are under development, but require more accurate knowledge of how the properties change to model performance and determine safe operational conditions.

Measurement systems capable of small–scale, pointwise thermal property measurements and low cost are necessary to measure these properties and integrate into hot cells where electronics are likely to fail during fuel investigation. This project develops a cheaper, smaller, and easily replaceable Fluorescent Scanning Thermal Microscope (FSTM) using the blue laser and focusing circuitry from an Xbox HD-DVD player.

The Design, Construction, and Thermal Diffusivity Measurements of the Fluorescent Scanning Thermal Microscope (FSTM)

As mentioned, Sam Zeloof shows off the actual chip in more detail:

Tocotronic is one of the bands I listened to during my teens/twenties. That dates me, that dates the band.

It’s very German. You’ll find their music on most streaming platforms. I recommend starting with the albums “wir kommen um uns zu beschweren” and “es ist egal aber”.

Anyhow. Now one member of the band starts a podcast!

Jan Müller has been a member of the band Tocotronic for more than 25 years. He is thoroughly familiar with the interview format and, as the one asking the questions, can easily put himself in his guests’ position. His personal selection of conversation partners forms the basis for authentic conversations that are always marked by interest and respect. With “Reflektor”, Jan launches his first podcast.

https://viertausendhertz.de/reflektor

The Podlove project once again leads the way in improving the experience and the way we interact with knowledge and thoughts, this time with the announcement and introduction of transcript support for podcasts. Click on it right now and try it yourself.

Fulltext-search. Listening to podcasts by reading them. This-is-amazing!

Transcripts are coming

from the Podlove Publisher 2.8 announcement

Transcripts are an incredibly desirable thing to have for podcasts: they allow searching for specific parts, increase searchability by search sites when presented properly and they increase the accessibility of audio content significantly too.

However, transcripts have been considerably difficult to be created and used. Manually created transcripts are costly in terms of time and money and even if you spend the money there has been a lack of technical standards for storing and integrating transcripts into websites in a defined way.

This is now slowly changing: more and more automated speech-to-text systems are becoming available at reasonable costs and they are creating ever better transcripts with more and more languages being supported.

Still, automatic transcripts trail manually created transcripts in terms of accuracy, punctuation and so on but they are increasingly useful when they are primarily used for improving search results or helping you with your internal research when trying to find content in your older episodes.

New services are also coming up to deal with these problems by allowing users to quickly build on automatic transcripts and improve them manually in an assisted fashion. We will soon see a landscape of tools and services that will make creating transcripts easy and cheap enough for more and more podcasters so it’s time to come up with a good integration.

Last but not least, the WebVTT file format has become a de-facto common denominator for passing transcripts along, supporting time codes, speaker identification and a rudimentary set of meta data. While not perfect it’s enough to get a transcript infrastructure up and running and Podlove is leading the way.
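To make the full-text search idea a bit more tangible, here is a minimal sketch of reading a WebVTT transcript and searching it. It is not how Podlove does it; the sample transcript, the speaker names and the helper names (`parse_vtt`, `search`) are made up for illustration, and the parser only understands `hh:mm:ss.mmm` timestamps and simple `<v Speaker>` voice tags.

```python
import re
from dataclasses import dataclass

# A tiny made-up transcript; speakers and text are illustrative only.
SAMPLE_VTT = """WEBVTT

00:00:00.000 --> 00:00:04.500
<v Alice>Welcome to the show.

00:00:04.500 --> 00:00:09.000
<v Bob>Today we talk about transcripts and search.
"""

# Cue timing line, restricted to the hh:mm:ss.mmm form (an assumption of this sketch).
TIMING = re.compile(
    r"(\d{2}):(\d{2}):(\d{2})\.(\d{3}) --> (\d{2}):(\d{2}):(\d{2})\.(\d{3})"
)
# Voice tag that carries the speaker identification.
VOICE = re.compile(r"<v ([^>]+)>(.*)", re.DOTALL)


@dataclass
class Cue:
    start: float  # seconds
    end: float    # seconds
    speaker: str
    text: str


def _seconds(hours, minutes, seconds, millis):
    return int(hours) * 3600 + int(minutes) * 60 + int(seconds) + int(millis) / 1000


def parse_vtt(data):
    """Return the cues of a WebVTT document; blocks without a timing line are skipped."""
    cues = []
    for block in data.strip().split("\n\n"):
        lines = block.splitlines()
        for index, line in enumerate(lines):
            match = TIMING.match(line)
            if match is None:
                continue
            groups = match.groups()
            payload = " ".join(lines[index + 1:]).replace("</v>", "").strip()
            voice = VOICE.match(payload)
            if voice:
                speaker, text = voice.group(1), voice.group(2).strip()
            else:
                speaker, text = "", payload
            cues.append(Cue(_seconds(*groups[:4]), _seconds(*groups[4:]), speaker, text))
            break
    return cues


def search(cues, term):
    """Case-insensitive full-text search over the cue texts."""
    needle = term.lower()
    return [cue for cue in cues if needle in cue.text.lower()]
```

Calling `search(parse_vtt(SAMPLE_VTT), "search")` returns the cues that mention the term, each with its start time, which is all a player needs to jump to that spot in the episode.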

Who would not want to know a place where colors are central? It is an encyclopedia of color names and of the many things associated with colors.

Like company logos, HTML color codes, named colors, emojis, flags, schools, sport teams, …

In less than 10 days the season of chaos will end and discord will take over.

To be prepared and to not miss any important days – as some sort of public service announcement – I hereby link you to the discordian calendars adjusted for the current year 3185 (2019).

A holyday not found on any calendar. A calendar not found on any planet. A planet not found in any universe. A universe not found in any imagination. An imagination not found.

cliche internet alias
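For the curious, the conversion behind such a calendar is simple enough to sketch. This is a minimal sketch assuming the usual Discordian rules (five seasons of 73 days, the year offset of 1166 that turns 2019 into 3185, and St. Tib's Day on February 29, which belongs to no season); the function name `to_discordian` is made up for illustration.

```python
import calendar
from datetime import date

SEASONS = ["Chaos", "Discord", "Confusion", "Bureaucracy", "The Aftermath"]
DAYS_PER_SEASON = 73
YEAR_OFFSET = 1166  # 2019 + 1166 = 3185


def to_discordian(day):
    """Return (season, day_in_season, year); St. Tib's Day has no day number."""
    year = day.year + YEAR_OFFSET
    leap = calendar.isleap(day.year)
    if leap and (day.month, day.day) == (2, 29):
        return ("St. Tib's Day", None, year)
    day_of_year = day.timetuple().tm_yday  # 1..366
    if leap and day_of_year > 60:
        day_of_year -= 1  # St. Tib's Day is not counted
    season, offset = divmod(day_of_year - 1, DAYS_PER_SEASON)
    return (SEASONS[season], offset + 1, year)
```

With these rules `to_discordian(date(2019, 3, 15))` is the first day of Discord, so the season of Chaos ends on March 14.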

One thing I cannot do without linking to external sources or giving up control over the content storage is to have videos here on the pages.

There are a couple of options to achieve this and I am evaluating some of them right now. The goal is very clear:

So let’s see some options tried out:

We are looking at our screens for more and more of the day, and most of that time we are reading or writing text. Text needs to look pretty so our eyes do not get sore. Apart from the obvious “being able to tell what letter that is”, a big portion of personal taste and preference goes into the choice of font.

Most of the texts I am writing benefit from monospaced fonts.

This blog celebrates monospaced fonts for programming.

programmingfonts.org/about

So many fonts have popped up in recent years.

Of course there’s a nice page available that previews the fonts right in your browser:

Earlier this week I stumbled upon a hacker-themed sticker that caught the attention of some people because it resembles a saying specific to a certain religion.

But in this case it actually says “alle gut hackbar.”, which is German and translates to “everyone is easily hackable”.

The Internet of Things might as well become your Internet of Money. Some feel the future lies with blockchain-related things like Bitcoin or Ethereum, and they might be right. In the meantime there’s also this huge field of personal finances that impacts our lives all day, every day.

And if you think about it, money has a lot of touch points throughout all situations of our lives, and so it also impacts the smart home.

Lots of sources of information can be accessed today. They help to stay on top of what is going on and to make conscious decisions and plans for the future. To a large extent the information is even available in real time.

– cost tracking and reporting

– alerting and goal setting

– consumption and resource management

– like fuel oil (get alerted on price changes, …)

– stock monitoring and alerting

– and more advanced even automated trading

– bank account monitoring, in- and outbound transactions

– expectations and planning

– budgeting

After all, this is about getting away from lock-in applications, freeing your personal financial data and having an overall dashboard of transactions, plans and status.

So you’re listening to this audio book for a while now, it’s quite long but really thrilling. In fact it’s too long for you to go through in one sitting. So you pause it and eventually listen to it on multiple devices.

We’ve got SONOS in our house and we’re using it extensively. Nice thing, all that connected goodness. It’s just short of some smart features, like remembering where you paused and resuming a long audio book at the exact position where you stopped the last time. Every time you play a different title it resets the play position and does not remember where you were.

With some simple steps the house will know the state of all players it has. Not only SONOS but maybe also your VCR or Mediacenter (later use-case coming up!).

Put the pieces together and you get this:

Whenever there’s a title being played longer than 10 minutes and it’s paused or stopped the smart house will remember who, where and what has been played and the position you’ve been at.

Whenever that person then is resuming playback the house will know where to seek to. It’ll resume playback, on any system that is supported at that exact position.

Makes listening to these things just so much easier.
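The rule described above can be sketched in a few lines. This is only an illustration of the idea, not the code from my bookmarker; the class and method names are made up, and how "who" is detected is left out entirely.

```python
from dataclasses import dataclass

MIN_PLAYED_SECONDS = 10 * 60  # only titles played longer than 10 minutes are remembered


@dataclass
class Bookmark:
    room: str        # where it was played
    title: str       # what was played
    position: float  # seconds into the title


class BookmarkStore:
    """Remembers who paused what, where, and at which position."""

    def __init__(self):
        self._bookmarks = {}  # (listener, title) -> Bookmark

    def on_pause_or_stop(self, listener, room, title, position, played_seconds):
        """Store a bookmark; returns True if the title qualified."""
        if played_seconds <= MIN_PLAYED_SECONDS:
            return False
        self._bookmarks[(listener, title)] = Bookmark(room, title, position)
        return True

    def resume_position(self, listener, title):
        """Position to seek to on any supported player; 0.0 if nothing is stored."""
        bookmark = self._bookmarks.get((listener, title))
        return bookmark.position if bookmark else 0.0
```

Keying the bookmark by listener and title rather than by player is what lets playback continue in a different room, or on a different device altogether.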

Bonus points for a mobile app that does the same thing but just on your phone. Park the car, go into the house, audiobook will continue playback, just now in the house instead of the car. The data is there, why not make use of it?

p.s.: big part of that I’ve opensourced years ago: https://github.com/bietiekay/sonos-auto-bookmarker

I have been using this for several years now. Even though all my workflow happens on Macintosh computers these days, I’ve kept this tool in my toolbox: Microsoft Image Composite Editor.

Now, after a long while with the 1.0 version, Microsoft Research decided to release a new version of the free tool with even more features and a new streamlined user interface. This is so much better than before.

[youtube]https://www.youtube.com/watch?v=zhdXLH2GYPA[/youtube]

“Image Composite Editor (ICE) is an advanced panoramic image stitcher created by the Microsoft Research Computational Photography Group. Given a set of overlapping photographs of a scene shot from a single camera location, the app creates a high-resolution panorama that seamlessly combines the original images. ICE can also create a panorama from a panning video, including stop-motion action overlaid on the background. Finished panoramas can be shared with friends and viewed in 3D by uploading them to the Photosynth web site. Panoramas can also be saved in a wide variety of image formats, including JPEG, TIFF, and Photoshop’s PSD/PSB format, as well as the multiresolution tiled format used by HD View and Deep Zoom.”

Source 1: http://research.microsoft.com/en-us/um/redmond/projects/ice/

I was on a business trip the other day and the office space of that company was very very nice. So nice that they had all sorts of automation going on to help the people.

For example when you would run into a room where there’s no light the system would light up the room for you when it senses your presence. Very nice!

There was some lag between me entering the room, being detected and the light powering up. So while running into a dark room, knowing I would be detected and soon there would be light, I shouted “Computer! Light!” while running in.

That StarTrek reference brought an old idea back that it would be so nice to be able to control things through omnipresent speech recognition.

I am aware that there’s Siri, Cortana, Google Now. But those things are creepy because they involve external companies. If there are things listening to me all day every day, I want them to stay within the premises of the house. I want to know exactly, down to the data flow, what is going on and what is sent where. I do not want this stuff to leave the house at any time. Apart from that, those services are working okayish but well…

Let alone the hardware. Usually the existing assistants are carried around in smart phones and such. Very nice if you want to touch things prior to talking to them. I don’t want to. And no, “Hey Siri!” or “OK Google” is not really what I mean. Those things are not sophisticated enough yet. I was using “Hey Siri!” for less than 24 hours. Because in the first night it seemed to have picked up something going on while I was sleeping which made it go full volume “How can I help!” on me. Yes, there’s no “don’t listen when I am sleeping” thing. Oh it does not know when I am sleeping. Well, you see: Why not?

Anyway. What I wish there was:

And all that should be working on a basic level without internet access. Just like that.

So? Any volunteers?

Like every year, the Chaos Communication Congress gathered thousands of people in one place between the Christmas holidays and New Year’s.

Since I was out of order this year and could not attend, I’ve opted for the attending-by-stream option. All lectures are live-streamed by the awesome CCC Video Operations Center (C3VOC) and made available as recordings afterwards.

Since the choice of topics is enormous here are some I can recommend:

Source 1: http://events.ccc.de/congress/2014/wiki/Static:Main_Page

Source 2: http://en.wikipedia.org/wiki/Chaos_Communication_Congress

Source 3: http://c3voc.de/

Source 4: http://media.ccc.de/browse/congress/2014/

Racing cars with petrol engines is getting less and less interesting for the masses, and even Formula 1 faces competition from Formula E.

Now having humans inside cars racing a wide track is one thing, but using relatively cheap but extremely high-tech multi-copters with first-person-view cameras mounted on them and flown by crazy guys sitting next to the “racing track” is the next big thing!

[youtube]https://www.youtube.com/watch?v=6zDDsX5xYcA[/youtube]

As you can see it basically looks like the Endor-scenes from Star Wars. In fact it does look so interesting that I am tempted to try it myself…

“OpenFlights is a tool that lets you map your flights around the world, search and filter them in all sorts of interesting ways, calculate statistics automatically, and share your flights and trips with friends and the entire world (if you wish). It’s also the name of the open-source project to build the tool.”

Source: http://openflights.org/

“Ever notice how people texting at night have that eerie blue glow?

Or wake up ready to write down the Next Great Idea, and get blinded by your computer screen?

During the day, computer screens look good—they’re designed to look like the sun. But, at 9PM, 10PM, or 3AM, you probably shouldn’t be looking at the sun.

f.lux fixes this: it makes the color of your computer’s display adapt to the time of day, warm at night and like sunlight during the day.

It’s even possible that you’re staying up too late because of your computer. You could use f.lux because it makes you sleep better, or you could just use it just because it makes your computer look better.”

Source: https://justgetflux.com/

The next time you stumble across a PDF file with security restrictions that does not allow you to print or copy/paste, do this (input.pdf and output.pdf are placeholder file names):

qpdf --decrypt input.pdf output.pdf
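If you want to do this from a script, a thin wrapper is enough. This is a minimal sketch that assumes qpdf is installed and on the PATH; the function names and the file names are placeholders, and the only qpdf option used is `--decrypt`.

```python
import shutil
import subprocess


def build_decrypt_command(source, target):
    """Argument list for: qpdf --decrypt <source> <target>"""
    return ["qpdf", "--decrypt", source, target]


def decrypt_pdf(source, target):
    """Write a copy of source without the restrictions to target."""
    if shutil.which("qpdf") is None:
        raise RuntimeError("qpdf was not found on the PATH")
    # check=True raises CalledProcessError on any non-zero exit code,
    # which (as far as I know) also covers runs that only produced warnings.
    subprocess.run(build_decrypt_command(source, target), check=True)
```

Passing the arguments as a list avoids any shell quoting trouble with file names that contain spaces.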

“QPDF is a command-line program that does structural, content-preserving transformations on PDF files. It could have been called something like pdf-to-pdf. It also provides many useful capabilities to developers of PDF-producing software or for people who just want to look at the innards of a PDF file to learn more about how they work.

QPDF is capable of creating linearized (also known as web-optimized) files and encrypted files. It is also capable of converting PDF files with object streams (also known as compressed objects) to files with no compressed objects or to generate object streams from files that don’t have them (or even those that already do). QPDF also supports a special mode designed to allow you to edit the content of PDF files in a text editor. For more details, please see the documentation links below.

QPDF includes support for merging and splitting PDFs through the ability to copy objects from one PDF file into another and to manipulate the list of pages in a PDF file. The QPDF library also makes it possible for you to create PDF files from scratch. In this mode, you are responsible for supplying all the contents of the file, while the QPDF library takes care off all the syntactical representation of the objects, creation of cross references tables and, if you use them, object streams, encryption, linearization, and other syntactic details.

QPDF is not a PDF content creation library, a PDF viewer, or a program capable of converting PDF into other formats. In particular, QPDF knows nothing about the semantics of PDF content streams. If you are looking for something that can do that, you should look elsewhere. However, once you have a valid PDF file, QPDF can be used to transform that file in ways perhaps your original PDF creation can’t handle. For example, programs generate simple PDF files but can’t password-protect them, web-optimize them, or perform other transformations of that type.”

Source 1: http://qpdf.sourceforge.net/

Source 2: https://github.com/qpdf/qpdf

“Shadertoy is the first application to allow developers all over the globe to push pixels from code to screen using WebGL since 2009.

This website is the natural evolution of that original idea. On one hand, it has been rebuilt in order to provide the computer graphics developers and hobbyists with a great platform to prototype, experiment, teach, learn, inspire and share their creations with the community. On the other, the expressiveness of the shaders has arisen by allowing different types of inputs such as video, webcam or sound.”

Source 1: https://www.shadertoy.com

I am a frequent podcast live-stream listener, and as such I am enjoying the awesome service called xenim streaming network.

Any podcast producer can join the xsn and live-stream their own podcast while recording. Its CDN is based on voluntarily provided resources and is pretty rock-solid as far as my experience with it goes.

Since I am a frequent user of this – and I’ve got that gorgeous SONOS hardware scattered around my house – I thought I need to have that service integrated into my SONOS set.

The SONOS system knows the concept of “Music Services”. There are quite a lot of them but xsn is missing. But SONOS is awesome and they got an API!

Unfortunately the API documentation is hidden behind an NDA wall, so that would be a no-go. What’s not hidden is what the SONOS controllers have to discuss with all the existing services. Most of the time these do not use HTTPS, so we’re free to listen to the chatter. I did just that and was able, for the sake of interoperability, to reverse engineer the SONOS SMAPI as far as is necessary to make my little xsn Music Service work.

As usual you can get the source-code distributed freely through Github. If you’re not into that sort of compiling and programming things, you are invited to use my free-of-charge provided service. To set it up on your home SONOS just follow these simple steps:

Step 1: Start your SONOS Controller Application and find out the IP address of your SONOS.

Click on “About My Sonos System” and check the IP address written next to the “Associated ZP”.

Step 2: Add the xsn Music Service.

Open a browser window and browse to: http://<your-associated-zp-ip>:1400/customsd.htm

When you’re there, fill out the fields as below. The SID is either 255 or, if you have used that previously, something between 240 and 253. The service name is “xenim streaming network”. The Endpoint URL and Secure Endpoint URL are both http://xsn.schrankmonster.de/xsn

Set the Polling interval to 30 seconds. Click on the Anonymous Authentication SOAP header policy and you’re good to go. Click on “send” to finish.

Step 3: Add the new Music Service to your SONOS Controller.

Click on “Add Music Services” and click through until you see “xenim streaming network”. Add the service and you’re set!

p.s.: It’s normal that the service icon is a question mark.

Step 4: Enjoy Live Podcasts!
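If you set this up on more than one system, the values from the steps above can be collected in a small helper. This is just a sketch: the function names and the dictionary keys are descriptive labels made up for illustration, not the actual form field names of the customsd page, and the IP address in the usage note is a placeholder.

```python
import ipaddress

XSN_ENDPOINT = "http://xsn.schrankmonster.de/xsn"


def customsd_url(zone_player_ip):
    """URL of the custom service page on the associated ZP."""
    ipaddress.IPv4Address(zone_player_ip)  # raises ValueError for a malformed address
    return f"http://{zone_player_ip}:1400/customsd.htm"


def pick_sid(used_sids):
    """255 if it is still free, otherwise the first free SID between 240 and 253."""
    for sid in [255, *range(240, 254)]:
        if sid not in used_sids:
            return sid
    raise ValueError("no free SID left")


def xsn_settings(used_sids=()):
    """The values to enter, keyed by descriptive labels."""
    return {
        "sid": pick_sid(set(used_sids)),
        "service_name": "xenim streaming network",
        "endpoint_url": XSN_ENDPOINT,
        "secure_endpoint_url": XSN_ENDPOINT,
        "polling_interval_seconds": 30,
        "authentication": "Anonymous",
    }
```

For example, `customsd_url("192.168.1.20")` gives the page to open in the browser and `xsn_settings([255])` gives the values for a system where SID 255 is already taken.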

Source 1: https://github.com/bietiekay/sonos-xsn-service

Source 2: http://streams.xenim.org/

“Knight Rider is back! After years of anxious waiting, RTL is finally showing the young David Hasselhoff again as he goes on a big crime hunt with his “wonder car”. Knight Rider was cult, Knight Rider is cult, and Knight Rider with beer is super cult! Glued-on dashboards whose buttons vary almost arbitrarily in color, labeling and arrangement, steering wheels that change while driving, stunt drivers with seriously sick clown hairdos, and many, many, REALLY many logic, filming or other kinds of errors invite a fun, alcohol-soaked analysis. The dumbest filming bloopers, the most embarrassing Hasselhoff pick-up lines, the most inconspicuous camouflage-sack car drivers.”

Source: http://www.regelt.org/knightrider/sprittforfun/knightrider.html

I am using an external podcast download tool to stay up to date on all the podcasts I have subscribed to. SubSonic is a good choice for this purpose, and for a lot more as well.

One of the quirks of the SONOS products is that Podcasts are not really well supported. In fact there is no support at all.

So I wrote a tool that extends the SONOS players with the functionality to “remember” play positions within audiobooks and podcasts. Now what’s left to properly have podcasts supported is a view of the most recently updated podcasts. Wouldn’t it be nice to have a “Folder View” in the SONOS controller of what’s new across all the different podcasts you are subscribed to?

Now here’s the trick:

Use a small script on any RaspberryPi in the house to dynamically create hardlinks to the podcast files in a “Recently Updated Podcasts” folder.

The script is something like this:

find /where-your-podcasts-are/ -type f -printf '%TY-%Tm-%Td %TT %p\n' | sort | tail -n 25 | cut -c 32- | sed -e "s/^/ln \"/" -e "s/$/\"/" -e "s/$/ \"\/recentPodcasts\/\"/" | sh

This short line will go through all folders and subfolders in /where-your-podcasts-are/ and then create hardlinks in /recentPodcasts to the 25 most recent files.
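If the shell one-liner is too cryptic, the same idea can be sketched in Python. This is not the script I run; the function name is made up, the paths in the usage note are the placeholders from above, and the sketch assumes the target folder lies outside the podcast folder but on the same file system (hardlinks cannot cross file systems). Unlike the one-liner, it replaces a link of the same name instead of failing on it.

```python
import os
from pathlib import Path


def link_recent_files(source_dir, target_dir, count=25):
    """Hardlink the `count` most recently modified files below source_dir into target_dir."""
    source = Path(source_dir)
    target = Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)

    # Collect all regular files in all subfolders, oldest first.
    files = [path for path in source.rglob("*") if path.is_file()]
    files.sort(key=lambda path: path.stat().st_mtime)

    linked = []
    for path in files[-count:]:
        link = target / path.name
        if link.exists():
            link.unlink()  # replace a stale link with the same name
        os.link(path, link)
        linked.append(link)
    return linked
```

Run from cron, a call like `link_recent_files("/where-your-podcasts-are/", "/recentPodcasts/")` keeps the folder current. Links to episodes that have dropped out of the newest 25 are not cleaned up by this sketch.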

That way, and when /recentPodcasts/ is made accessible to your SONOS controllers, you’ll have something like this:

Source 1: http://www.subsonic.org

Source 2: play position bookmarker

Airplay allows you to conveniently play music and videos over the air from your iOS or Mac OS X devices on remote speakers.

Since we just recently “migrated” almost all audio equipment in the house to SONOS multi-room audio, we were missing the convenience of just pushing a button on the iPad or iPhones to stream audio from those devices inside the household.

To retrofit the Airplay functionality there are two options I know of:

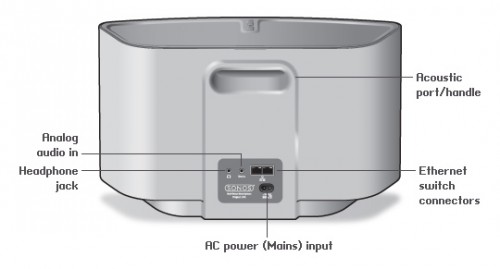

1: Get Airplay compatible hardware and connect it to a SONOS Input.

You have to get Airplay hardware (like the Airport Express/Extreme,…) and attach it physically to one of the inputs of your SONOS Set-Up. Typically you will need a SONOS Play:5 which has an analog input jack.

2: Set-Up a RaspberryPi with NodeJS + AirSonos as a software-only solution

You will need a stock RaspberryPi online in your home network. Of course this can run on virtually any other device or hardware that can run NodeJS. For the Pi setting it up is a fairly straight-forward process:

You start with a vanilla Raspbian Image. Update everything with:

sudo apt-get update

sudo apt-get upgrade

Then install NodeJS according to this short tutorial. To set up the AirSonos software you will need to install additional avahi software. In particular, this was needed for my install:

sudo apt-get install git-all libavahi-compat-libdnssd-dev

You then need to get the AirSonos software:

sudo npm install airsonos -g

After some minutes of wait time and hard work by the Pi you will be able to start AirSonos.

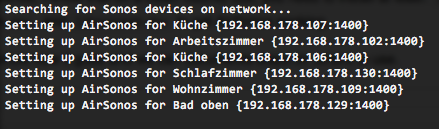

sudo airsonos

And it’ll come up with an enumeration of all active rooms.

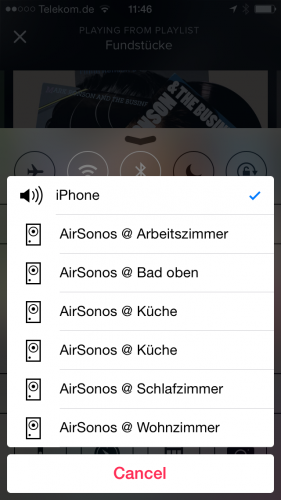

And on all your devices it’ll show up like this:

and

We’re living near a very nice city called Bamberg. And after a long time there are new webcams available for anyone to look at. Even a 360 degree panoramic view!

Source 1: https://www.stadt.bamberg.de/

Source 2: http://www.bnv-bamberg.de/index.php/hotline/71-die-neuen-webcams

Having live streams of common German news radio handy when in other countries is essential. Here’s a good offer (not made by the radio stations :-))

Source 1: http://www.drad.io/

Quite an interesting read of things to have in mind when doing, you know… life :-)

“Make time to pursue your passion, no matter how busy you are.“

Source 1: http://zenhabits.net/20-things-i-wish-i-had-known-when-starting-out-in-life/

This coming Friday the Space Shuttle Endeavour is scheduled to lift off for the last time, which is also the second-to-last launch of any Space Shuttle. That is something you want to be there for :-)

Luckily I have just discovered the gentlemen (and ladies?) of SpaceLiveCast. Apparently they have been doing live streams of various spaceflight events for quite a while.

P.S.: If I had one wish, it would be that the site offered a video podcast feed…. (is help needed?)

Source 1: http://spacelivecast.de/

Source 2: http://www.raumfahrer.net

Source 3: http://spacelivecast.de/2011/04/29-04-ab-1900-uhr-sts-134-letzter-endeavour-flug/

It’s been some years since the former Microsoft Research project went public and Microsoft started offering its great Photosynth service to everyone.

I’ve been using the Microsoft panoramic and Photosynth tools for years now and I tend to say that they are the best tools one can get to create fast, easy and high-quality panoramic images.

There is photosynth.net to store all those panoramic pictures like this one from 2008:

The photosynth technology itself contains several other interesting technologies like SeaDragon which allows high quality image zooming on current internet connection speeds.

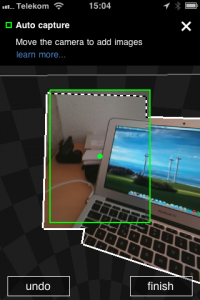

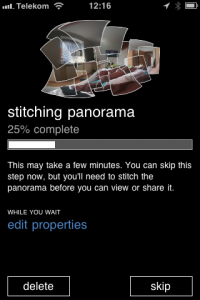

This awesome technology is as of now available on the iPhone (3GS and upwards) and it’s better than all the other panoramic tools I’ve used on a phone.

Source 1: Photosynth articles from the past

Source 2: Photosynth in Wikipedia

Source 3: Photosynth on iPhone App Store

Low Latency Network Audio was a dream for the past years (see an article of 2005 and 2008) and with AirPlay it’s finally there.

I have been using the Apple AirPlay technology for several years now… since it got implemented into iOS it’s just fantastic to have the option of playing whatever sound source I want, loud and clear, in any room I want…

Okay, it’s not quite as sophisticated as the Sonos solution regarding the control of multiple music sources in multiple rooms, but it gets the job done in an apartment.

So back to the topic: Apple integrated the AirPlay technology into their wireless base station “AirPort Express”. Basically AirPlay is a piece of software which receives an encrypted audio stream over the network and outputs the stream to the SPDIF or audio jack.

Back in 2005 there already was an emulator of this protocol called “Fairport”, but Apple decided to encrypt the AirPlay traffic. This led to the problem that the encryption key was unknown because it’s baked into the AirPort Express firmware. And this is where the good news starts:

“My girlfriend moved house, and her Airport Express no longer made it with her wireless access point. I figured it’d be easy to find an ApEx emulator – there are several open source apps out there to play to them. However, I was disappointed to find that Apple used a public-key crypto scheme, and there’s a private key hiding inside the ApEx. So I took it apart (I still have scars from opening the glued case!), dumped the ROM, and reverse engineered the keys out of it.”

So to keep things short: Someone got an AirPort Express, dumped the firmware, extracted the AirPlay encryption keys and wrote an emulator of the AirPlay protocol which uses the key. Voilà!

ShairPort is available as source code on its author’s site, and obviously it’s unclear whether Apple will react by changing the encryption key in the future. But for the time being it works as advertised:

I took one of my computers and followed the instructions to update perl, install Macports and then run ShairPort. When ShairPort is running it does not look as appealing as expected:

Notably it uses IPv6 to communicate between iTunes and ShairPort… Oh I almost forgot to show how it looks in iTunes:

On another side note: It works on Linux, Windows and Mac OS X :-)

Source 1: Apple AirPlay

Source 2: Sonos

Source 3: Apple AirPort Express

Source 4: ShairPort