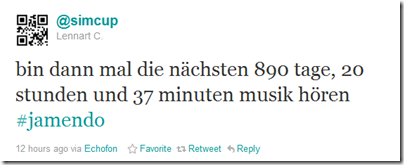

Yesterday @simcup wrote on Twitter that he is currently downloading the whole Jamendo catalog of Creative Commons music.

Although I already knew Jamendo, it had never occurred to me to download their whole catalog. Since I am a fan of choice, I immediately wondered how I could download the catalog too. The only clue about how to do that was a cryptic URI-like text, so it suddenly sounded like a great idea to write a universal tool and release it as open source. This tool should allow users to download the whole catalog and keep their local Jamendo mirror in sync with the server, so that whenever new artists, albums or tracks are added the user does not need to download everything again.

So my only starting point was that cryptic URI, which pointed me to something I had never heard of called Rhythmbox. It turns out that this is a GNOME music player application with Jamendo integration. After some clueless poking around I decided to take a look at the source of Rhythmbox, especially the Jamendo module.

This module is written in Python and quite clean to read. Just by looking at the first lines I came across the interesting fact that Jamendo provides an XML dump of its catalog that is updated almost daily. Hurray! Since Jamendo wants developers to interact with the platform, they put documentation online which allows anyone to write tools that stream and download tracks. After following all the clues I finally ended up on this page.

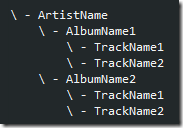

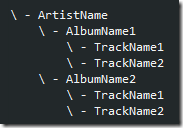

So there are the catalog download, track stream and torrent URIs necessary to download the catalog. Now the only thing needed is a tool which parses the XML and creates a nice folder structure for us.

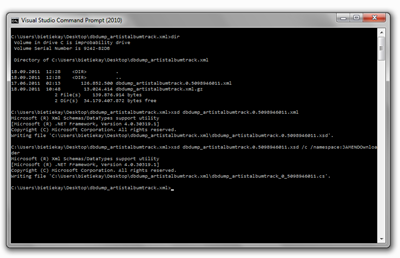

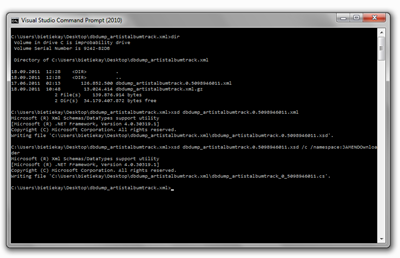

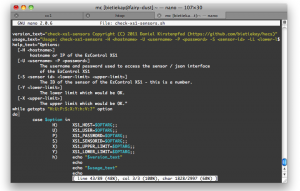

Parsing XML in C# (my preferred programming language) is easy. Basically you can use a tool called XSD.exe: first let it generate an XSD from the XML, and then ready-to-use C# classes from that XSD.

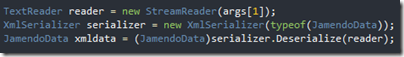

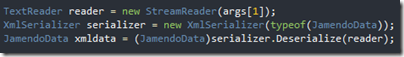

After doing all that, actually reading the whole catalog into a usable form boils down to just three lines of code.

Isn’t it great how modern frameworks take away the complexity of such tasks? At this point I’ve already parsed the whole catalog into my tool and only wrote three lines of code. The rest was generated automatically for me. Best of all, this also works on non-Windows operating systems when you use Mono.
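To make the parsing step a bit more concrete, here is a minimal sketch in Python rather than C#. It is not the code from my tool: the element names in the sample (`JamendoData`, `Artists`, `artist`, `Albums`, `album`, `Tracks`, `track`, `id`, `name`) are assumptions about how the dump is laid out, and the tiny inline document merely stands in for the real catalog file.

```python
import xml.etree.ElementTree as ET

# Tiny stand-in for the catalog dump. The element names are assumptions,
# not a verified copy of the real file layout.
SAMPLE = """<JamendoData>
  <Artists>
    <artist>
      <id>1</id>
      <name>Example Artist</name>
      <Albums>
        <album>
          <id>10</id>
          <name>Example Album</name>
          <Tracks>
            <track><id>100</id><name>First Song</name></track>
            <track><id>101</id><name>Second Song</name></track>
          </Tracks>
        </album>
      </Albums>
    </artist>
  </Artists>
</JamendoData>"""


def parse_catalog(xml_text):
    """Return a list of artist dicts, each with nested albums and tracks."""
    root = ET.fromstring(xml_text)
    artists = []
    for artist in root.iterfind("Artists/artist"):
        albums = []
        for album in artist.iterfind("Albums/album"):
            tracks = [
                {"id": track.findtext("id"), "name": track.findtext("name")}
                for track in album.iterfind("Tracks/track")
            ]
            albums.append({
                "id": album.findtext("id"),
                "name": album.findtext("name"),
                "tracks": tracks,
            })
        artists.append({
            "id": artist.findtext("id"),
            "name": artist.findtext("name"),
            "albums": albums,
        })
    return artists


catalog = parse_catalog(SAMPLE)
```

The generated C# classes do the same job with typed objects instead of dictionaries; the nested artist, album and track shape is the part that carries over.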

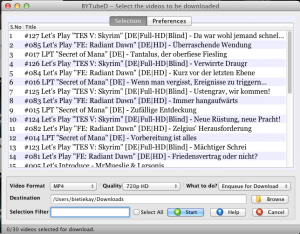

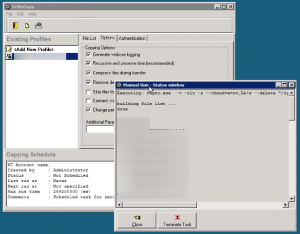

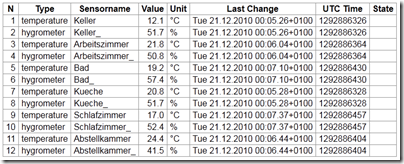

Once the XML data is parsed and available in a nice data structure, it’s easy to iterate through all artists, all albums and all tracks and then download the actual MP3 or Ogg files. And that’s basically what my tool does: it takes the XML, parses it, and downloads. Before downloading, it checks whether the track already exists, so it only downloads tracks added since the last run.
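The check-then-download loop described above could look roughly like the following Python sketch. It is an illustration under assumptions, not the tool's actual code: `safe_name`, `track_path` and `sync` are hypothetical names, the artist/album/track folder layout is one plausible choice, and `fetch` is a placeholder for whatever performs the real HTTP request against the stream URI.

```python
import re
from pathlib import Path


def safe_name(name):
    """Replace characters that are awkward in file and folder names."""
    return re.sub(r'[\\/:*?"<>|]', "_", name).strip() or "_"


def track_path(root, artist, album, track, ext="mp3"):
    """Build <root>/<artist>/<album>/<track>.<ext> (assumed layout)."""
    return Path(root) / safe_name(artist) / safe_name(album) / f"{safe_name(track)}.{ext}"


def sync(catalog, root, fetch):
    """Download every track that is not on disk yet and return the new paths.

    catalog: iterable of (artist, album, track_name, track_id) tuples
    fetch:   hypothetical callable that takes a track id and returns the
             file content as bytes; a real tool would request the track's
             stream URI here
    """
    downloaded = []
    for artist, album, name, track_id in catalog:
        target = track_path(root, artist, album, name)
        if target.exists():
            # Already mirrored in an earlier run, so skip it.
            continue
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_bytes(fetch(track_id))
        downloaded.append(target)
    return downloaded
```

Running `sync` a second time with the same catalog downloads nothing, and running it with a grown catalog fetches only the new entries, which is the whole trick behind keeping the mirror in sync.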

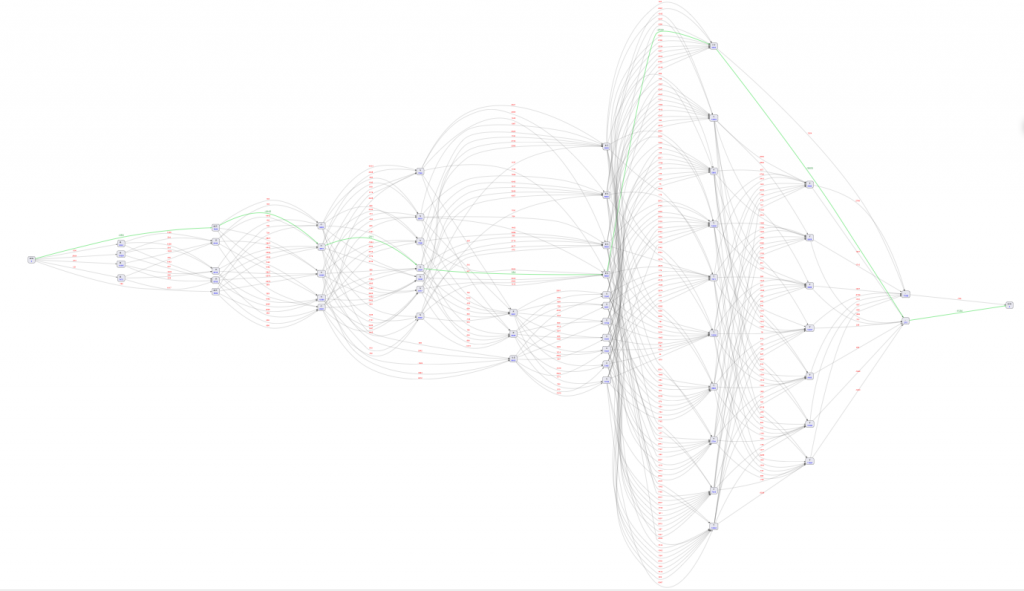

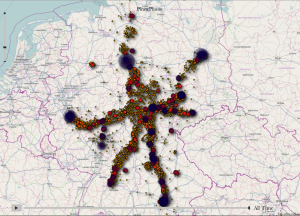

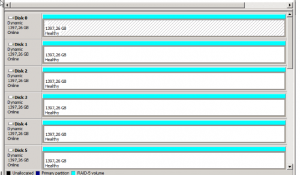

Additionally, since I am deeply involved in the development of the GraphDB graph database at sones, I want to make use of the Jamendo data and the graph structure it forms. The directory structure my tool generates is only one way of looking at the data, so it’s quite interesting to demonstrate the capabilities of GraphDB based on that data.

The idea behind the graph representation of the data is that you can start from almost any starting point imaginable, no matter whether you start from a single track and drill up into genre and artists, or start at a location and drill down to tracks.
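To illustrate the "start anywhere" idea without GraphDB itself, here is a small in-memory sketch in Python. It says nothing about how GraphDB stores or queries data: `CatalogGraph`, the `(kind, name)` vertex tuples and the sample entries are all made up for this example.

```python
from collections import defaultdict


class CatalogGraph:
    """Directed graph of (kind, name) vertices with parent and child links."""

    def __init__(self):
        self.down = defaultdict(set)  # parent -> children
        self.up = defaultdict(set)    # child -> parents

    def add_edge(self, parent, child):
        self.down[parent].add(child)
        self.up[child].add(parent)

    @staticmethod
    def _walk(start, edges):
        """Collect every vertex reachable from start via the given edge map."""
        seen, stack = set(), [start]
        while stack:
            node = stack.pop()
            for nxt in edges.get(node, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        return seen

    def drill_up(self, start):
        return self._walk(start, self.up)

    def drill_down(self, start):
        return self._walk(start, self.down)


# Made-up sample data: location -> artist -> album -> track, genre -> track.
g = CatalogGraph()
g.add_edge(("location", "Berlin"), ("artist", "Artist A"))
g.add_edge(("location", "Berlin"), ("artist", "Artist B"))
g.add_edge(("artist", "Artist A"), ("album", "Album 1"))
g.add_edge(("artist", "Artist B"), ("album", "Album 2"))
g.add_edge(("album", "Album 1"), ("track", "Song 1"))
g.add_edge(("album", "Album 2"), ("track", "Song 2"))
g.add_edge(("genre", "Rock"), ("track", "Song 1"))

# Start at a track and drill up, or start at a location and drill down.
above_song = g.drill_up(("track", "Song 1"))
berlin_tracks = sorted(n for k, n in g.drill_down(("location", "Berlin")) if k == "track")
```

Here `above_song` contains the album, genre, artist and location of "Song 1", while `berlin_tracks` lists both songs. A directory tree can only answer the second kind of question cheaply, which is why the graph view is the more flexible one.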

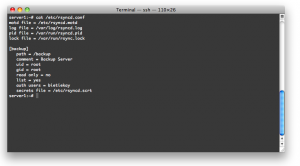

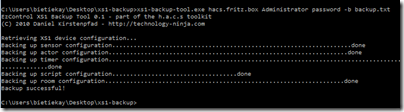

So for GraphDB integration, the Downloader outputs a GraphQL script which can be imported into an instance of GraphDB.

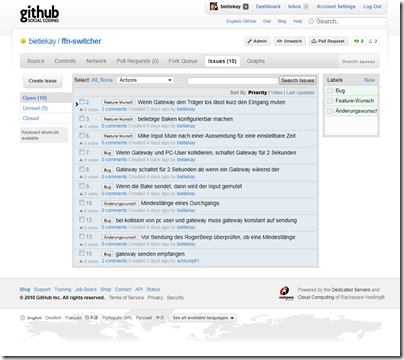

The source code of my tool is available on GitHub and released under the BSD license – feel free to play with it and to contribute.

Source 1: http://www.jamendo.com

Source 2: https://github.com/bietiekay/JAMENDOwnloader

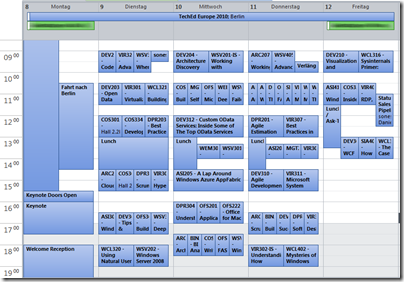

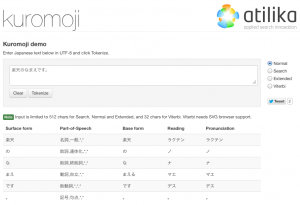

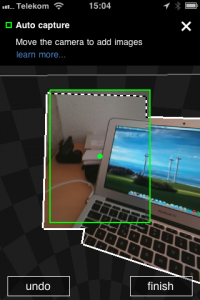

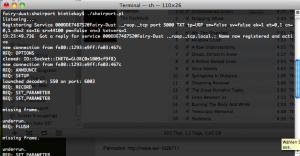

![Screenshot 2010-12-13 at 21.24.02](https://www.schrankmonster.de/wp-content/uploads/2010/12/Bildschirmfoto-2010-12-13-um-21.24.02_thumb.png)