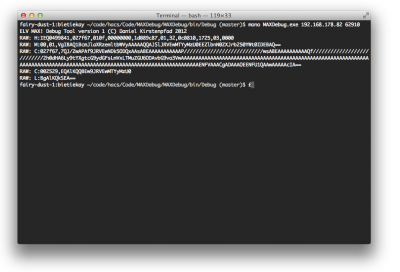

It’s been some weeks since I wrote a status update on the ELV MAX! cube protocol reverse engineering and its integration into my own home automation project called h.a.c.s.

So first of all I want to give a short overview of what has been achieved so far:

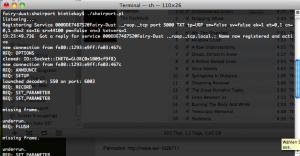

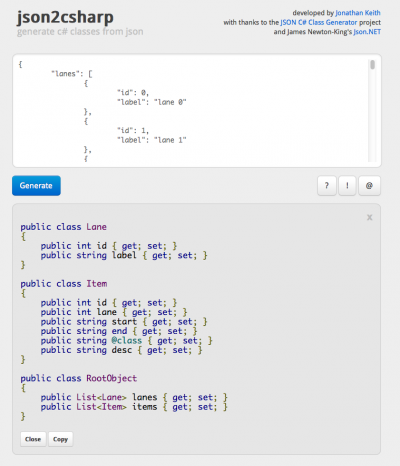

- I wrote a C# library, highly influenced by a PHP implementation from the domotica forum, which allows you to continuously get status information from the ELV MAX! cube with the current (1.3.6) firmware. So far it has been tested with a fairly big set-up for the ELV MAX! cube (see below)

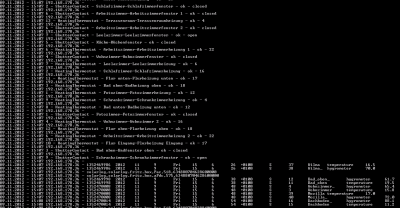

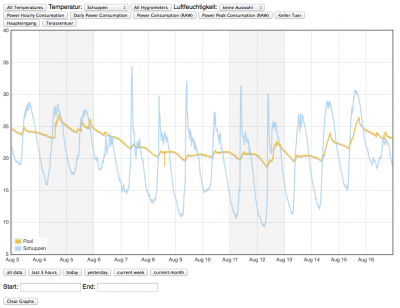

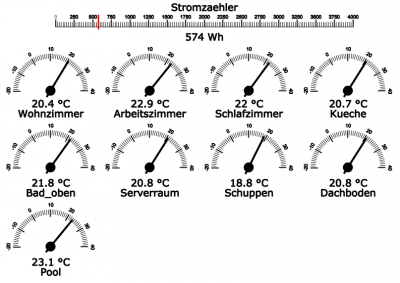

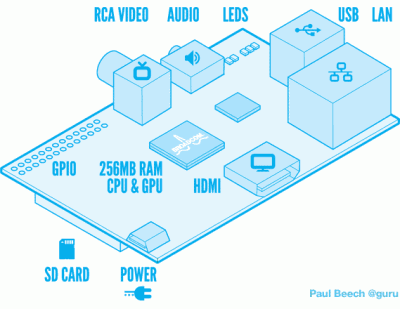

- I was able to integrate that library into my own home automation project called h.a.c.s. – There the ELV MAX! cube is just another device, alongside an EzControl XS1 and a SolarLog 500. The cube is monitored using my library, and diff-sets as well as status information are stored automatically with the h.a.c.s. built-in mechanisms. In fact you can access, for example, the window shutter contact information just like you would with any other door contact in the EzControl XS1.

- You can use events coming from the ELV MAX! cube to create new events – how about switching off/on devices when opening/closing windows?

- Every bit of information from all integrated sensor monitoring and actor handling devices comes together in h.a.c.s.
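The "events create new events" idea from the list above can be sketched in a few lines. This is not the h.a.c.s. code (which is C#); it is a minimal Python illustration, and the names `RuleEngine`, `on`, `handle` and the sensor name `window_kitchen` are all made up for the example.

```python
from typing import Callable, Dict, List, Tuple

# (sensor name, new state), e.g. ("window_kitchen", "open"); names are hypothetical
Event = Tuple[str, str]


class RuleEngine:
    """Maps incoming sensor events to follow-up actions."""

    def __init__(self) -> None:
        self._rules: Dict[Event, List[Callable[[], None]]] = {}

    def on(self, sensor: str, state: str, action: Callable[[], None]) -> None:
        # Register an action to run whenever `sensor` reports `state`.
        self._rules.setdefault((sensor, state), []).append(action)

    def handle(self, sensor: str, state: str) -> int:
        # Run every action registered for this event; return how many ran.
        actions = self._rules.get((sensor, state), [])
        for action in actions:
            action()
        return len(actions)
```

A rule such as "window opens, so switch the heater off" would then be registered with `on("window_kitchen", "open", switch_heater_off)`, where `switch_heater_off` stands in for whatever call actually drives the actor.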

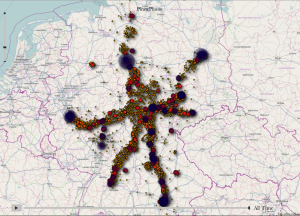

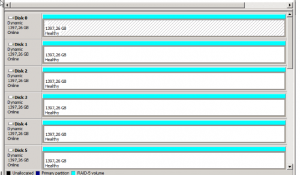

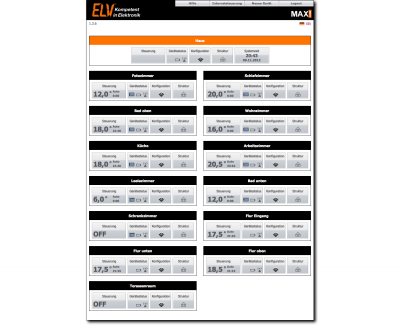

I started the reverse engineering with just one shutter contact and one thermostat. After all my tests were successful I went for the big package and ordered some more sensors. This is how the setup is currently configured:

I’ve learned a lot of interesting things about the ELV MAX! cube hardware and software. One is that you need to be ready for surprises. The documentation of the cube tells you the following:

Did you spot the funny fact? 50 devices – we’re well below that limit. 10 rooms – holy big mansion, Batman! We’re well over that. How is that possible? Well, take it as a fact – you can create more than 10 rooms. And that is very handy. I’ve created 13 rooms and there are probably more to come, because those shutter contacts are quite cheap and can be used for various other home automation sensor games. The tool to set up and pair those sensors just came up with a notice that said “Oh well, you want to create more than 10 rooms? If you’re sure that you want that we allow you to, but hey, don’t blame us!”. Cool move, ELV! – As of now I haven’t found any downside to having more than 10 rooms.

All my efforts started with firmware version 1.3.5. This firmware seemed to have some severe memory leaks: just by retrieving the current configuration information every 10 seconds, the device would stop communicating after a bit more than 48 hours. Only a reboot could revive it – and sometimes amnesia set in, which led to a round trip through the house for me.

With some changes in the library (like keeping the connection open as long as possible) and the new firmware version 1.3.6, the cube was way more cooperative and hasn’t crashed for about a month now (with a 10-second update interval).
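"Keeping the connection open as long as possible" roughly means: connect once, reuse the socket for every poll, and only reconnect after a failure. Here is a minimal Python sketch of that pattern. It is not my C# library; `PersistentPoller` and `poll_once` are made-up names, and the request bytes are a placeholder rather than a real cube command.

```python
import socket
from typing import Callable, Optional


class PersistentPoller:
    """Keeps one TCP connection open and reconnects only after a failure."""

    def __init__(self, connect: Callable[[], socket.socket]) -> None:
        self._connect = connect          # factory that returns a connected socket
        self._sock: Optional[socket.socket] = None
        self.connect_count = 0           # how often a new connection was needed

    def poll_once(self, request: bytes, bufsize: int = 4096) -> bytes:
        # Open a connection only if there is none from a previous poll.
        if self._sock is None:
            self._sock = self._connect()
            self.connect_count += 1
        try:
            self._sock.sendall(request)
            data = self._sock.recv(bufsize)
            if not data:
                raise ConnectionError("peer closed the connection")
            return data
        except OSError:
            # Drop the broken socket so the next poll reconnects.
            self.close()
            raise

    def close(self) -> None:
        if self._sock is not None:
            self._sock.close()
            self._sock = None
```

In a real loop you would call `poll_once` every 10 seconds, with `connect` being something like `lambda: socket.create_connection((host, port))`, where `host` and `port` are the address of your cube.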

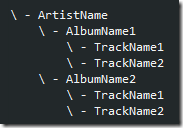

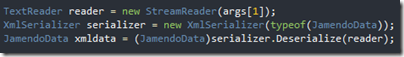

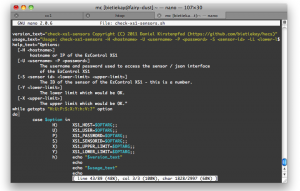

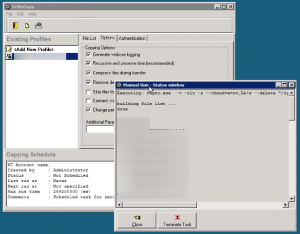

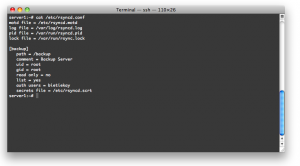

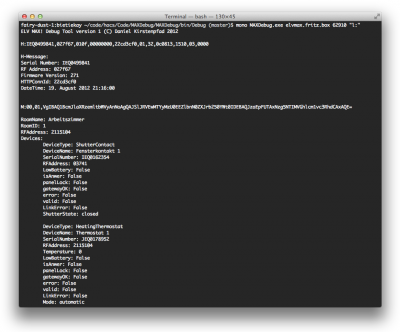

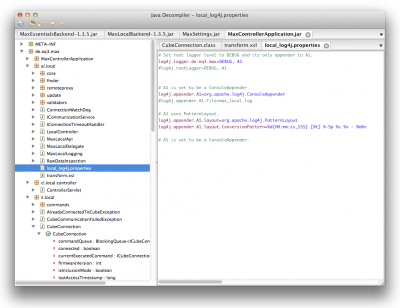

So what does my library do? It is designed to run in its own thread. When it’s started it opens a connection to the cube and retrieves the current status and configuration information. That information is stored in an object called “House”. This house consists of multiple rooms – and those rooms are filled with window shutter contacts and thermostats. All information related to those different instances is stored along with them. The integration into h.a.c.s. allows the library to generate sensor and actor events (like when a temperature changes or a window opens/closes) which are passed back to h.a.c.s. and handled in the big event loop there.
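To make the House/room/device idea and the diff-sets a bit more tangible, here is a small Python sketch. The real library is C# and its classes look different; `Device`, `Room`, `House`, `diff_houses` and the state keys used here are assumptions made purely for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Device:
    """A thermostat or shutter contact; `state` holds its last known values."""
    name: str
    kind: str  # e.g. "thermostat" or "shutter_contact" (illustrative labels)
    state: Dict[str, object] = field(default_factory=dict)


@dataclass
class Room:
    name: str
    devices: Dict[str, Device] = field(default_factory=dict)


@dataclass
class House:
    rooms: Dict[str, Room] = field(default_factory=dict)


Change = Tuple[str, str, str, object, object]


def diff_houses(old: House, new: House) -> List[Change]:
    """Return (room, device, key, old_value, new_value) for every changed value."""
    changes: List[Change] = []
    for room_name, room in new.rooms.items():
        old_room = old.rooms.get(room_name)
        for dev_name, dev in room.devices.items():
            old_dev = old_room.devices.get(dev_name) if old_room else None
            old_state = old_dev.state if old_dev else {}
            for key, value in dev.state.items():
                if old_state.get(key) != value:
                    changes.append((room_name, dev_name, key, old_state.get(key), value))
    return changes
```

Each poll builds a fresh `House`; comparing it with the previous one yields exactly the changes that are worth turning into events.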

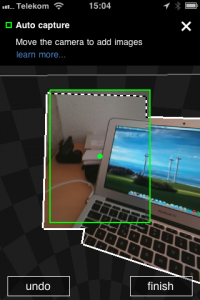

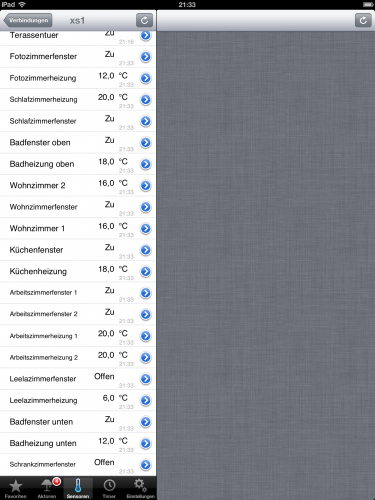

With all that ELV MAX! cube data I wanted to plug a quite nice tool that I am using on the iPhone and the iPad. It’s called “Moni4home” and it allows you to control the EzControl XS1 directly. Because it only accesses the EzControl XS1, I used h.a.c.s. to “inject” additional sensor data into the standard EzControl XS1 data. So basically the data flow is like this: the iPad app accesses h.a.c.s., which acts as a proxy. h.a.c.s. retrieves the EzControl XS1 sensor and actor data and injects additional virtual sensors like those from the ELV MAX! cube. h.a.c.s. then sends that beefed-up data to the iPad app. Voilà!
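The injection step of that proxy can be sketched like this in Python. Be aware that the payload layout (a dict with a `"sensor"` list whose entries carry `id`, `name` and `value`) is an assumption for the example, not the documented EzControl XS1 format, and `inject_virtual_sensors` is a made-up name.

```python
import copy
from typing import Dict, List


def inject_virtual_sensors(xs1_payload: Dict, virtual_sensors: List[Dict],
                           key: str = "sensor") -> Dict:
    """Return a copy of the payload with extra sensors appended under `key`."""
    merged = copy.deepcopy(xs1_payload)   # never modify the original response
    existing = merged.setdefault(key, [])
    # Number the injected sensors after the highest id already in use.
    next_id = max((s.get("id", 0) for s in existing), default=0) + 1
    for offset, sensor in enumerate(virtual_sensors):
        entry = dict(sensor)
        entry["id"] = next_id + offset
        existing.append(entry)
    return merged
```

The proxy would fetch the real response, run it through a function like this and hand the merged result to the app, which then cannot tell real sensors from virtual ones.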

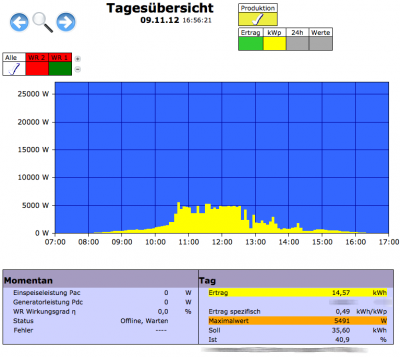

After the successful integration of the ELV MAX! cube I’ve started to work on the next piece of networked home automation equipment in my house – a solar panel data logger called “Solar-Log 500”. This device monitors two solar power inverters and stores the sensor data.

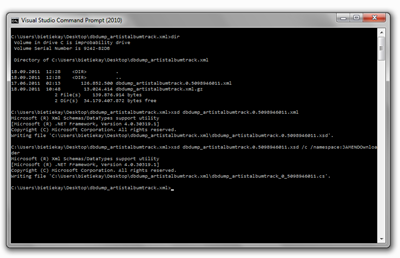

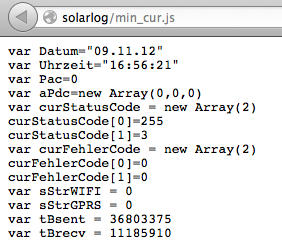

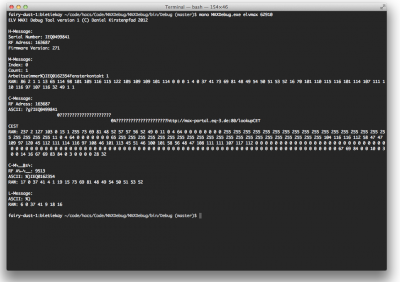

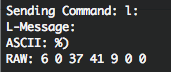

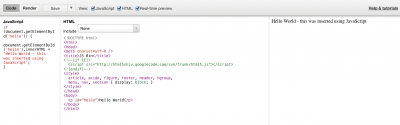

“Funny” story first: this device has the same problem as the ELV MAX! cube. When you start to poll it every 10 seconds (or less) it just stops operating after about 20 hours. Bear in mind: in the case of the Solar-Log I just issue an HTTP GET for a page that looks like this in the browser:

And by doing so every 10 seconds the device stops working. I am using the current firmware – so one workaround for that issue is to schedule a reboot of the Solar-Log at a time when there is no sun and therefore nothing to log or monitor.

Besides that it’s a fairly easy process: Get that information, log it. Done.
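"Get that information, log it" could look roughly like the following Python sketch. The URL of the status page is not shown here and has to be supplied by you; `fetch_page` and `poll_and_log` are made-up names, and the page is logged as raw text because parsing depends on what your Solar-Log actually returns.

```python
import csv
import urllib.request
from typing import Callable


def fetch_page(url: str, timeout: float = 5.0) -> str:
    """HTTP GET the given page and return its body as text."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def poll_and_log(fetch: Callable[[], str], writer, now: float) -> bool:
    """Fetch once and write one CSV row; return True on success."""
    try:
        page = fetch()
        writer.writerow([int(now), "ok", page.strip()])
        return True
    except OSError as exc:
        # urllib's URLError and socket timeouts are OSError subclasses, so a
        # hung or rebooting device ends up as an "error" row instead of a crash.
        writer.writerow([int(now), "error", str(exc)])
        return False
```

A loop would call `poll_and_log(lambda: fetch_page(url), csv.writer(logfile), time.time())` every 10 seconds; a long run of "error" rows is then a good hint that the device has hung again.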

So there you have it – h.a.c.s. interfacing with three different devices and roughly 100 sensors and actors over 434 MHz/868 MHz, wireless and wired network. There’s still more to come!

A lot of people seem to be diving into home automation these days. Apparently Andreas is also about to start his own home automation project. Good to know that he is also using the EzControl XS1 and in the future maybe even the ELV MAX! cube. Party on, Andreas!

Source 1: ELV MAX! cube progress

Source 2: Reverse Engineering the ELV MAX! cube protocol

Source 3: ELV MAX! cube – home automation for the heating

Source 4: http://www.solar-log.com/de/produkte-loesungen/solar-log-500/uebersicht.html

Source 5: h.a.c.s. sourcecode

Source 6: http://monitor4home.com/Beschreibung.html

Source 7: http://www.aheil.de/2012/11/06/hack-the-planet-architectural-draft/

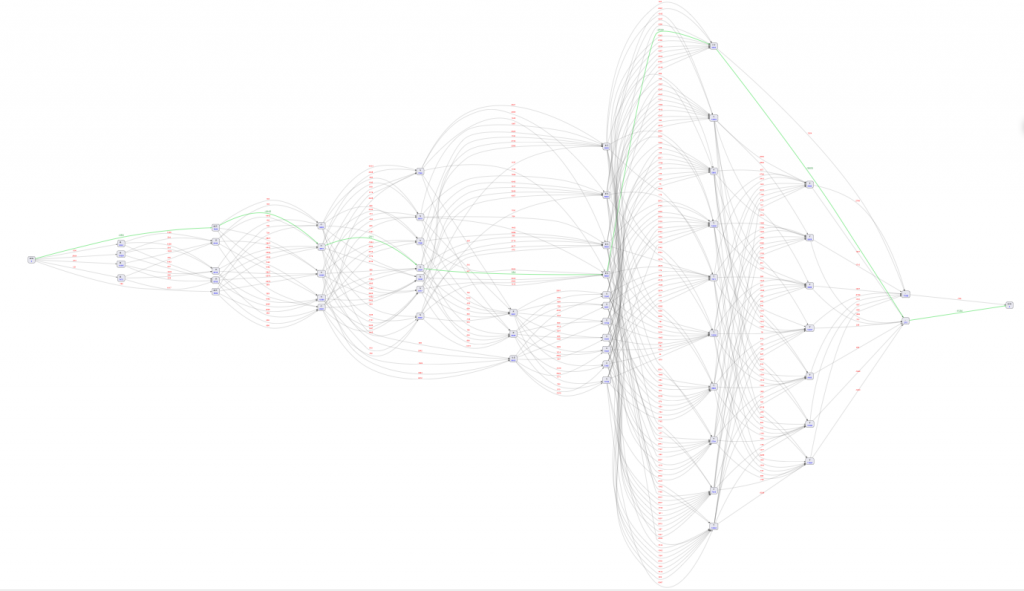


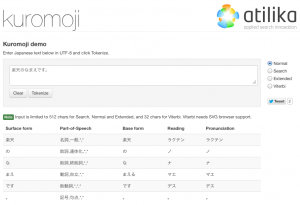
