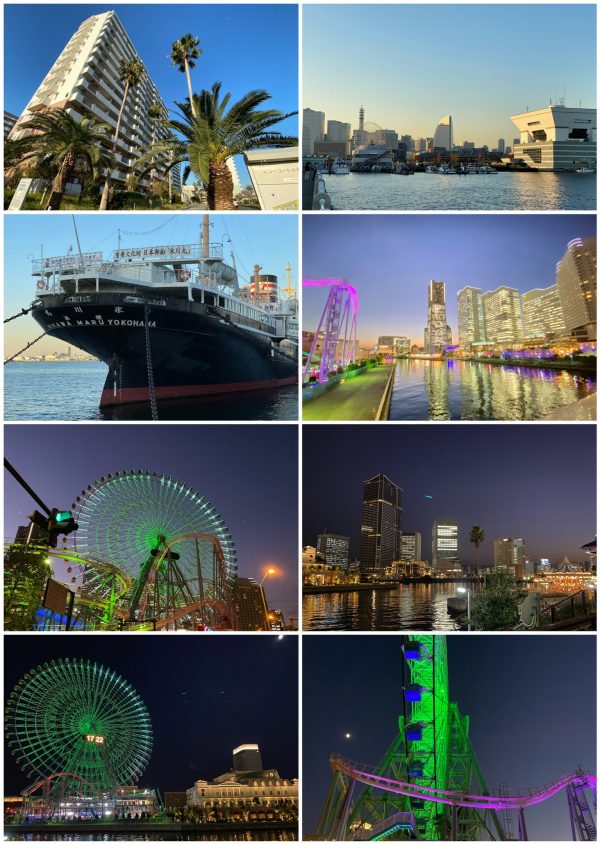

Japan November 2019: Day 4

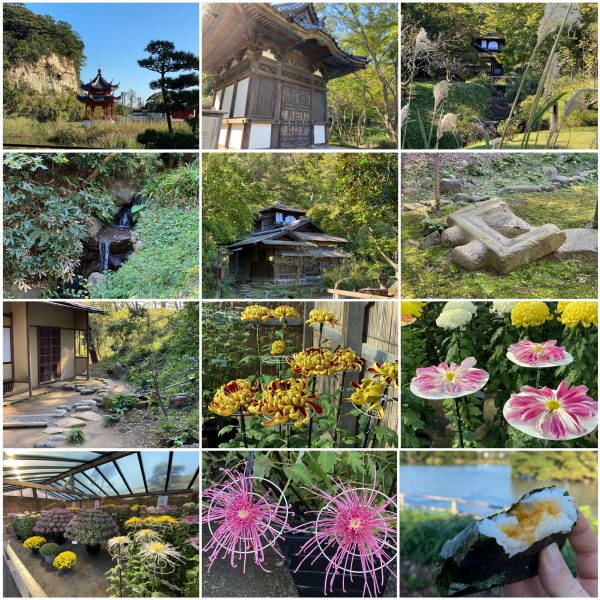

Japan November 2019: Day 3

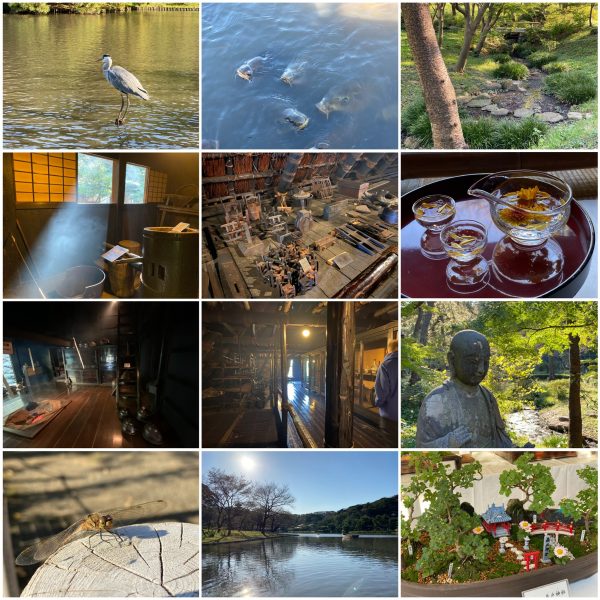

Japan November 2019: Day 2

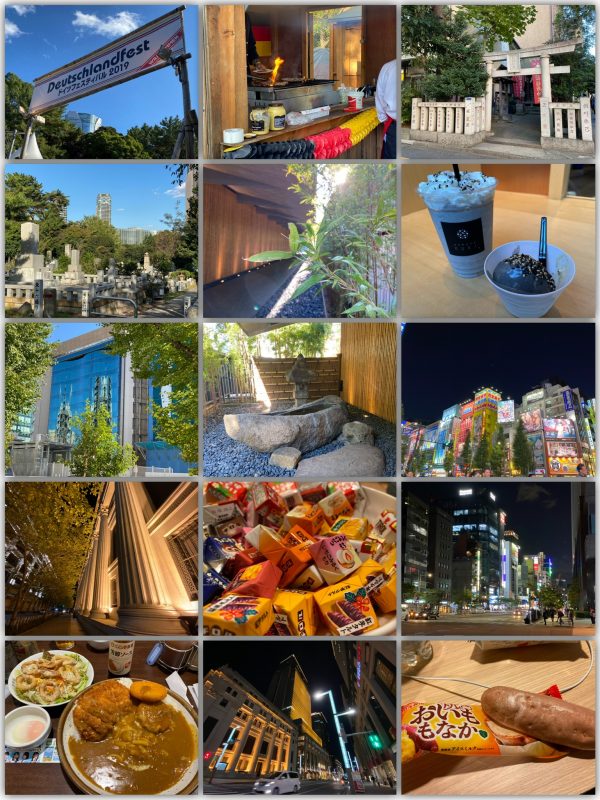

Japan November 2019: Day 1

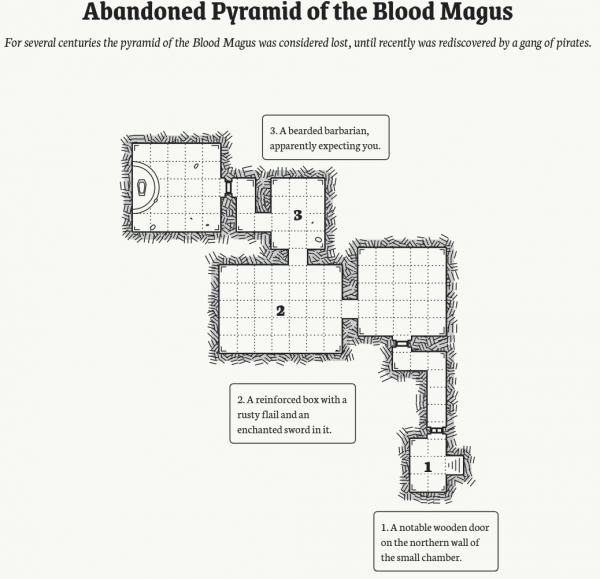

procedural dungeon map generator

There are lots of entries in the “procedural generation contest”, yet this one, like yesterday’s, is worth a mention:

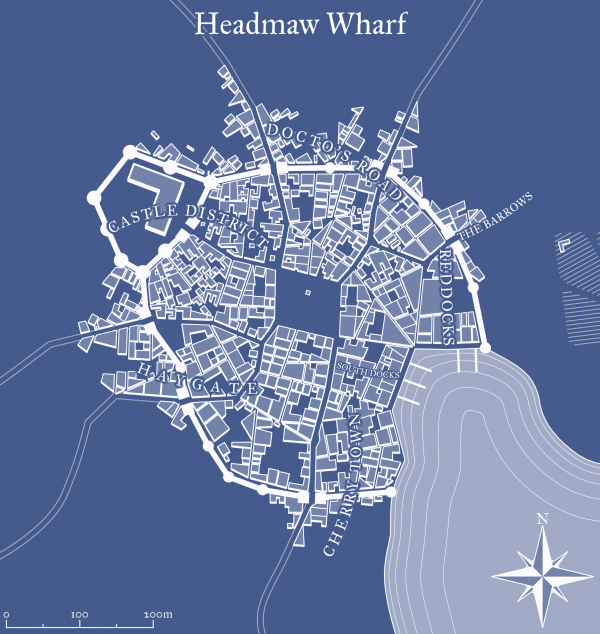

procedurally generated cities

This application generates a random medieval city layout of a requested size. The generation method is rather arbitrary, the goal is to produce a nice looking map, not an accurate model of a city. Maybe in the future I’ll use its code as a basis for some game or maybe not.

Medieval Fantasy City Generator

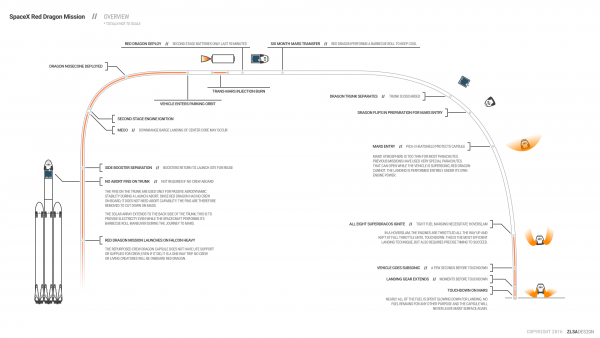

Spaceflight Infographics

A great source of SpaceX-related infographics is this page here.

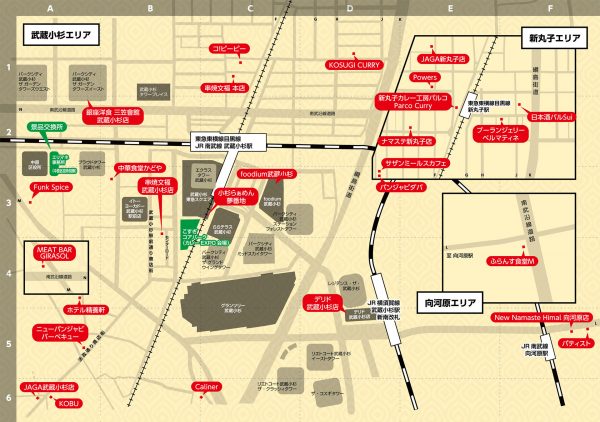

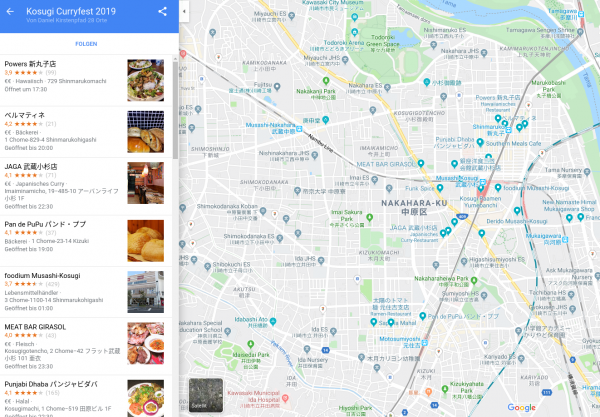

Musashikosugi Curry Fest

If you do not know Japanese curry yet, you are missing out big time.

Unfortunately, due to Typhoon 19, the Musashikosugi Curry Expo was cancelled along with the overall Kosugi Festival 2019.

But the curry stamp rally started before the typhoon hit the city and carries on until the end of October.

It works like this:

You go to each restaurant. You eat a meal. You get a stamp.

The more stamps you collect, the more valuable the prizes: more meal coupons, even electronics!

But anyway. It’s all about Japanese and Indian curry. And for that

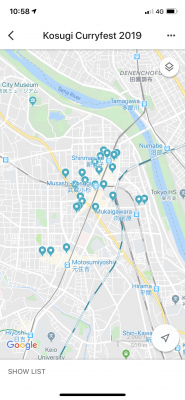

As you too might want to tick all 28 restaurants off your bucket list, take this map I made of all 28:

As you can see – on the iPhone Google Maps app you even get a nice progress bar of those you already visited. I’ve been to parco curry already – so that counted.

ASCII browser games

A lot is going on in browsers these days. They are becoming increasingly powerful and resource-demanding.

So it just feels natural to combine resource-hungry infrastructure with low-resource graphics to get the worst of both worlds.

Not quite, but you get the idea.

There’s a guy on the internet (haha) who dedicates time to writing ASCII / character-based graphics engines and games with them.

Meet MrGumix:

Of course, what are those games and graphics?

Exhibit #1:

And the more advanced Exhibit #2:

more blacker

A month ago I wrote about a very black paint. This month brings me a paper about an even blacker substance.

The synergistically incorporated CNT–metal hierarchical architectures offer record-high broadband optical absorption with excellent electrical and structural properties as well as industrial-scale producibility.

Paper: Breakdown of Native Oxide Enables Multifunctional, Free-Form Carbon Nanotube–Metal Hierarchical Architectures

Convert HEIC to JPEG or PNG

If you own a modern phone it’s very likely that it will store the photos you take in a wonderful format called HEIC, the HEVC-encoded flavor of the “High Efficiency Image File Format (HEIF)”.

Now the issue with this format is that your average toolchain is based upon things like Portable Network Graphics (PNG), JPEG and maybe GIF or Scalable Vector Graphics (SVG).

So HEIC does not quite fit yet. But you can make it fit with this on Linux.

ImageMagick and current GIMP installations apparently still don’t come pre-compiled with HEIF support. But you can install a tool to easily convert an HEIC image into a JPG file on the command line.

Run apt install libheif-examples, and then the tool heif-convert is your friend.
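If you have a whole folder of photos, a small wrapper saves some typing. This is only a minimal sketch: it assumes heif-convert is on your PATH and takes its usual form of an optional -q quality followed by input and output file. The function names and the default quality of 90 are my own choices for illustration.

```python
import shutil
import subprocess
from pathlib import Path


def heif_convert_command(src: Path, quality: int = 90) -> list[str]:
    """Build the heif-convert call that writes a .jpg next to the source file."""
    dst = src.with_suffix(".jpg")
    return ["heif-convert", "-q", str(quality), str(src), str(dst)]


def convert_folder(folder: Path) -> None:
    """Convert every .heic file in the folder (case-insensitive suffix match)."""
    if shutil.which("heif-convert") is None:
        print("heif-convert not found; install libheif-examples first")
        return
    for src in sorted(folder.iterdir()):
        if src.suffix.lower() == ".heic":
            # check=True raises if heif-convert reports a failure
            subprocess.run(heif_convert_command(src), check=True)


if __name__ == "__main__":
    convert_folder(Path("."))
```

Run it inside the folder with your photos; the original HEIC files are left untouched.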

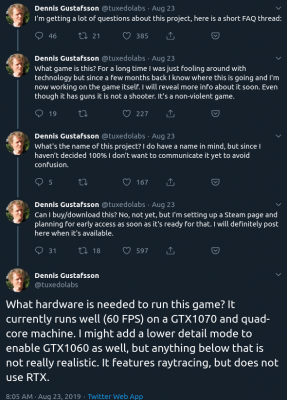

Raytraced Voxel Physics

The amount of computing power available in today’s hardware is reaching levels where raytracing and high detail physics simulations are within reach.

Dennis Gustafsson shared on his Twitter and blog some insights and videos of his experiments and implementations. Be astonished:

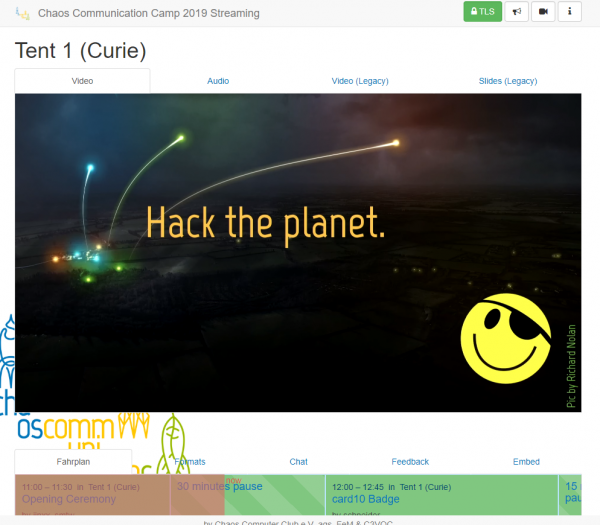

CCCamp is upon us!

The CCCamp 2019 has just started and you can join the live streaming anytime.

グランツリー武蔵小杉 and Park City Forest Towers in daylight

electronic fireworks

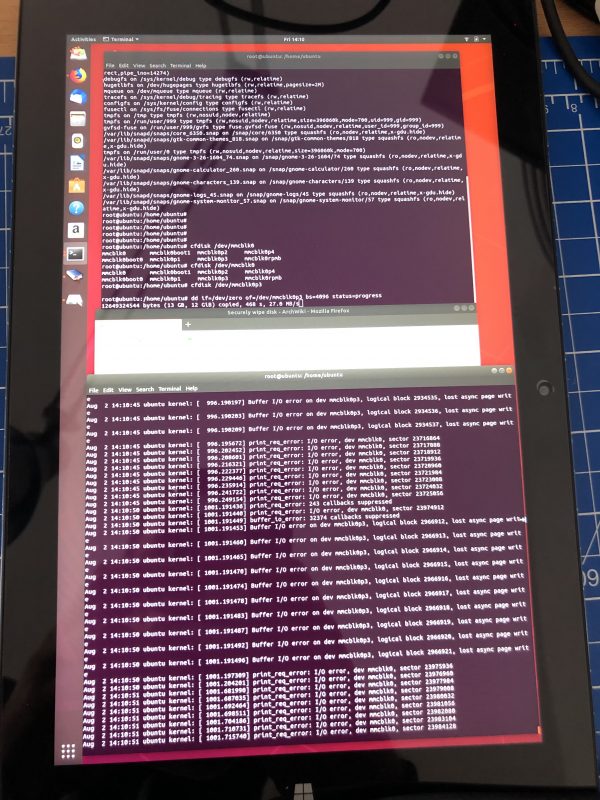

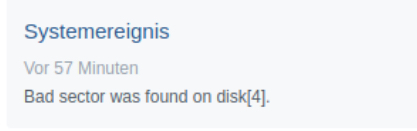

The firecracker exploded. Apparently after 2 weeks of usage of the Chuwi Hi10 Air the eMMC flash is malfunctioning.

In a totally strange way: Every byte on the eMMC can be read, seemingly. Even Windows 10 boots. But after a while it will hang and blue screen, apparently because it tries to write to the eMMC, those writes fail and pile up in the caches, and at some point the system calls it quits.

Anyhow: It means that no byte that is right now on this eMMC can be deleted / overwritten but only be read.

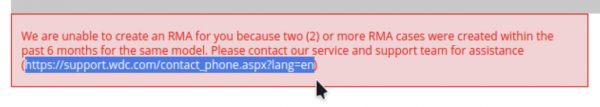

The great Chinese support is really helpful and offered to replace the device free of charge right away. That’s very nice! But I came to the conclusion that I cannot send the device in, because:

It contains a full set of synced private data that I cannot remove by any means because the freaking soldered-on eMMC flash is broken.

The recipient of this broken tablet in China would be able to read all my data and I could not do anything about it.

Only an extremely small fraction of the data on there is unencrypted: just the part that was written before I had switched on encryption during the initial set-up I was still doing on the device. And that little piece of data is already what won’t let me send out the device.

Now, what can we learn from this? We can learn: Never ever ever work with anything, even during set-up, without full encryption.

can’t stop being amazed by this display

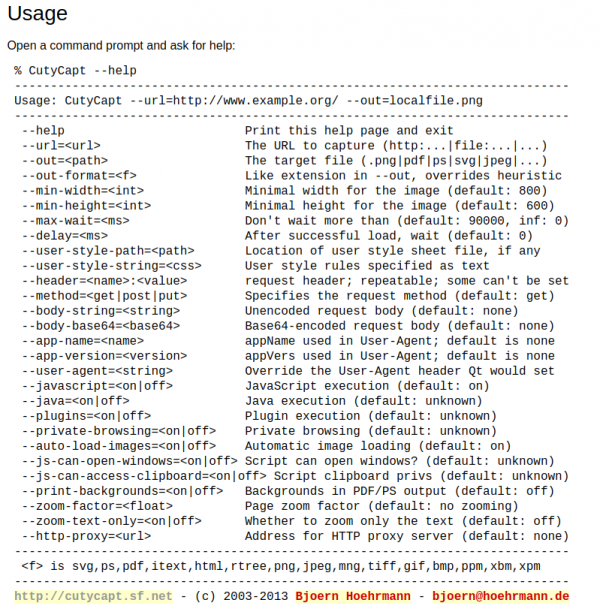

full website screenshots from your commandline

Think of this: You want to capture a whole web page, several screens of scrolling long, in one image.

This can be difficult without the right tools. It surely is a lot of work to stitch together a screenshot tens of thousands of pixels tall from multiple single screenshots…

CutyCapt is there to help! It’s a command line tool encapsulating the very powerful WebKit browser engine to render a full page and then create a single file screenshot of the whole page for you.
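A minimal sketch of how such a call can be scripted, assuming the --url, --out and --min-width options of CutyCapt. The binary name is passed in as a parameter because some distribution packages install it in lowercase; the function names, the example URL and the default width are made up for illustration. On a machine without a display you would additionally need something like xvfb-run in front of the command.

```python
import shutil
import subprocess


def cutycapt_command(url: str, out: str, min_width: int = 1280,
                     binary: str = "CutyCapt") -> list[str]:
    """Build a CutyCapt call that renders the full page at url into one image."""
    return [binary, f"--url={url}", f"--out={out}", f"--min-width={min_width}"]


def capture(url: str, out: str, binary: str = "CutyCapt") -> bool:
    """Run the capture if the binary exists; return True when it was started."""
    if shutil.which(binary) is None:
        print(f"{binary} not found on PATH")
        return False
    subprocess.run(cutycapt_command(url, out, binary=binary), check=True)
    return True


if __name__ == "__main__":
    # example.org is a placeholder, not the site shown in the screenshot
    capture("https://example.org", "page.png")
```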

For example, this is what it did when told to screenshot this website:

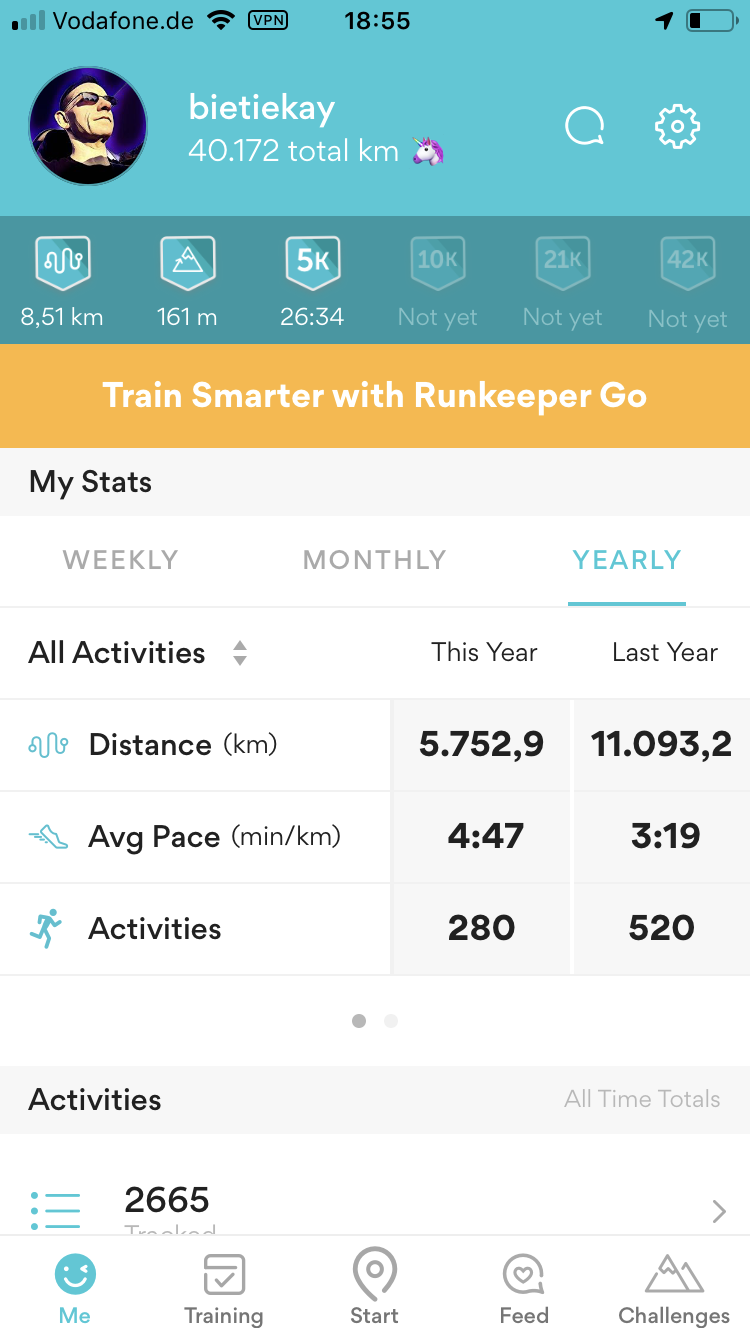

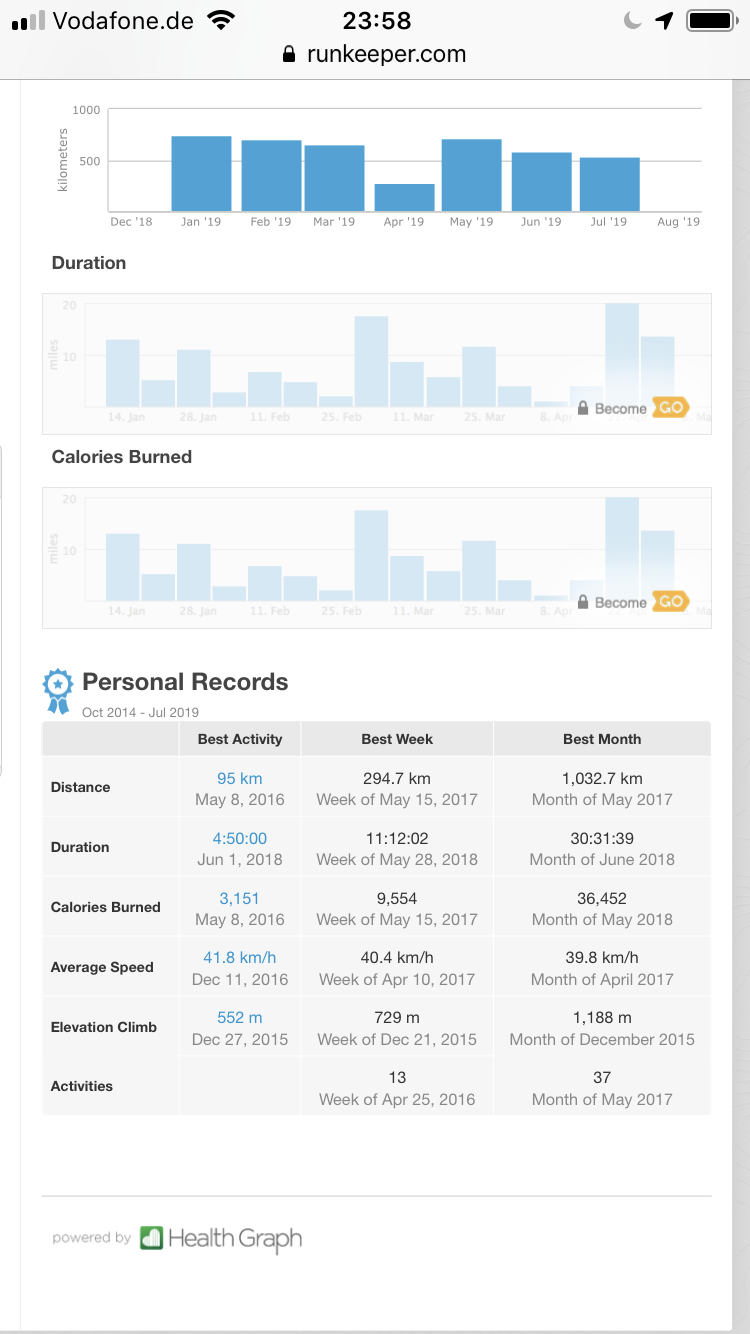

4 years of sports

Addition: Of course the app I am using is called Runkeeper, yet I am mostly cycling. On average I am actually doing about 30km every day right before work starts. Here’s an additional statistic just for cycling:

PixelFed – Federated Image Sharing

In search of alternatives to the traditional centralized hosted social networks a lot of smart people have started to put time and thought into what is called “the-federation”.

The Federation refers to a global social network composed of nodes that talk to each other. Each of them is an installation of software which supports one of the federated social web protocols.

What is The Federation?

You may already have heard about projects like Mastodon, Diaspora*, Matrix (Synapse) and others.

The PixelFed project seems to gain some traction as apparently the first documentation and sources are made available.

PixelFed is a federated social image sharing platform, similar to instagram. Federation is done using the ActivityPub protocol, which is used by Mastodon, PeerTube, Pleroma, and more. Through ActivityPub PixelFed can share and interact with these platforms, as well as other instances of PixelFed.

the-federation

I am posting this here as a note in my personal logbook.

Given that there’s already a Dockerfile I will give it a try as soon as possible.

CCCamp 2019 – 21. – 25. August 2019

The Chaos Communication Camp is an international, five-day open-air event for hackers and associated life-forms. It provides a relaxed atmosphere for free exchange of technical, social, and political ideas. The Camp has everything you need: power, internet, food and fun. Bring your tent and participate!

CCCamp 2019 Wiki

It was in 2005 that I last had the time and chance to attend an international open-air meeting of normal people. Of course I am talking about the 2005 What-the-hack I wrote about back then.

This year it’s time again for the Chaos Communication Camp in Germany. Sadly, I still won’t be attending. Clearly that needs to change with one of the next iterations. With the CCC events becoming highly valuable also for families maybe it’s a chance in the future to meet up with old and valued friends (wink-wink Andreas Heil).

The Chaos Communication Camp (also known as CCCamp) is an international meeting of hackers that takes place every four years, organized by the Chaos Computer Club (CCC). So far all CCCamps have been held near Berlin, Germany.

The camp is an event for providing information about technical and societal issues, such as privacy, freedom of information and data security. Hosted speeches are held in big tents and conducted in English as well as German. Each participant may pitch a tent and connect to a fast internet connection and power.

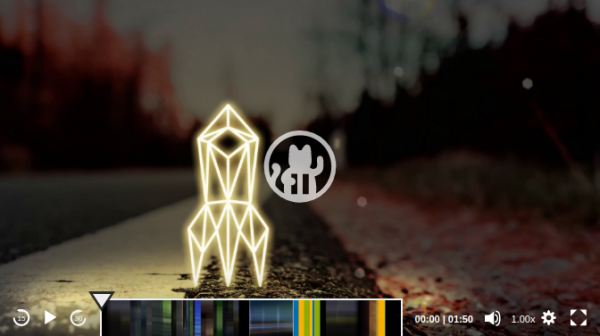

Enjoy the intro-movie that has just been made available to us, alongside the whole design material:

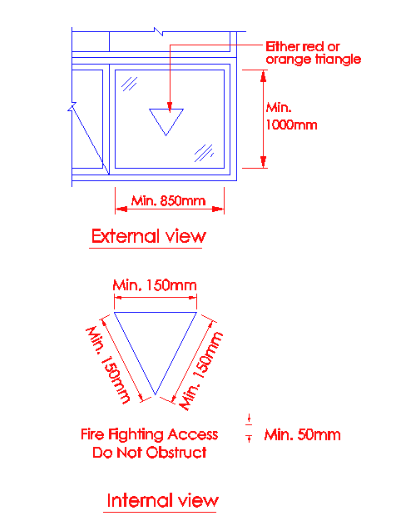

a red triangle on the window

When you walk around in Tokyo you will find that many buildings have red-triangle markings on some of the windows / panels on the outside.

I noticed them as well but I could not think of an explanation. Digging for information brought up this:

Panels to fire access openings shall be indicated with either a red or orange triangle of equal sides (minimum 150mm on each side), which can be upright or inverted, on the external side of the wall and with the wordings “Firefighting Access – Do Not Obstruct” of at least 25mm height on the internal side.

Singapore Firefighting Guide 2018

The red triangles on the buildings/hotel windows in Japan are the rescue paths to be used in case of fire. All fire fighters know the meaning of this red triangle on the windows. Red in color makes it prominent, to be located easily by the fire fighters in case of a fire incident. During a fire incident, windows are generally broken to allow for smoke and other gases to come out of the building.

Triangles in Japan

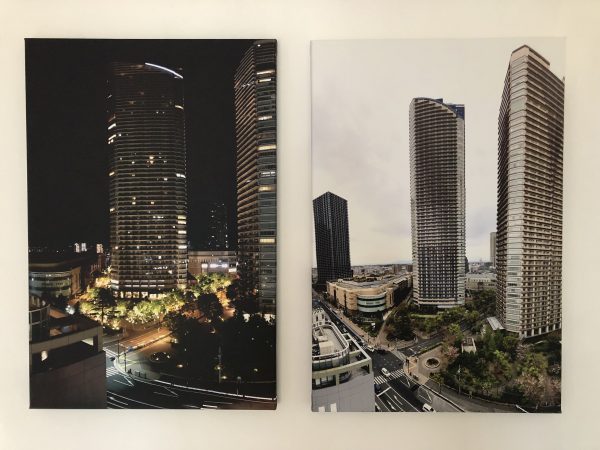

グランツリー武蔵小杉 and Park City Forest Towers on canvas

I’ve blogged about those pictures taken day- and night-time before. I’ve also blogged about how they were produced.

With a picture spot freed at one of the walls in our house we decided to print the day and night pictures on canvas and have them side-by-side. And it looks great, I think.

get your voxels done properly: SpriteStack

SpriteStack is a voxel editor suited for 2D artists featuring hand crafted retro renderer with animation support. It exports spritesheets, slices and vox models.

SpriteStack

Bitmap & tilemap generation with the help of ideas from quantum mechanics

You can get a glimpse of the beautiful side of science with visualizations and algorithms that output visual results.

This is the example of producing lots and lots of complex data (houses!) from a small set of input data. It is widely used in game development but also can be helpful to generate parameterized test and simulation environments for machine learning.

So before sending you over to the more detailed explanation the visual example:

These are lots of different house images. They are generated using a program called WaveFunctionCollapse:

WFC initializes output bitmap in a completely unobserved state, where each pixel value is in superposition of colors of the input bitmap (so if the input was black & white then the unobserved states are shown in different shades of grey). The coefficients in these superpositions are real numbers, not complex numbers, so it doesn’t do the actual quantum mechanics, but it was inspired by QM. Then the program goes into the observation-propagation cycle:

On each observation step an NxN region is chosen among the unobserved which has the lowest Shannon entropy. This region’s state then collapses into a definite state according to its coefficients and the distribution of NxN patterns in the input.

On each propagation step new information gained from the collapse on the previous step propagates through the output.

On each step the overall entropy decreases and in the end we have a completely observed state, the wave function has collapsed.

It may happen that during propagation all the coefficients for a certain pixel become zero. That means that the algorithm has run into a contradiction and can not continue. The problem of determining whether a certain bitmap allows other nontrivial bitmaps satisfying condition (C1) is NP-hard, so it’s impossible to create a fast solution that always finishes. In practice, however, the algorithm runs into contradictions surprisingly rarely.

Wave Function Collapse algorithm has been implemented in C++, Python, Kotlin, Rust, Julia, Go, Haxe, JavaScript and adapted to Unity. You can download official executables from itch.io or run it in the browser. WFC generates levels in Bad North, Caves of Qud, several smaller games and many prototypes. It led to new research. For more related work, explanations, interactive demos, guides, tutorials and examples see the ports, forks and spinoffs section.
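To make the observation-propagation cycle a bit more tangible, here is a rough sketch in Python. It is not the original program: instead of NxN patterns learned from an input bitmap it uses the simpler idea of hand-written adjacency rules between whole tiles. The tile names, weights and rules are invented for illustration. The loop is the same though: pick the undecided cell with the lowest Shannon entropy, collapse it, propagate the consequences, and start over on a contradiction.

```python
import math
import random

# Hypothetical tile set with weights and symmetric adjacency rules.
TILES = ["sea", "coast", "land"]
WEIGHTS = {"sea": 3.0, "coast": 1.0, "land": 3.0}
ALLOWED = {("sea", "sea"), ("sea", "coast"), ("coast", "coast"),
           ("coast", "land"), ("land", "land")}


def compatible(a, b):
    return (a, b) in ALLOWED or (b, a) in ALLOWED


def entropy(options):
    """Shannon entropy of the weighted options still possible in one cell."""
    total = sum(WEIGHTS[t] for t in options)
    return -sum((WEIGHTS[t] / total) * math.log(WEIGHTS[t] / total) for t in options)


def neighbours(x, y, w, h):
    for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nx, ny = x + dx, y + dy
        if 0 <= nx < w and 0 <= ny < h:
            yield nx, ny


def propagate(grid, start, w, h):
    """Drop options without a compatible neighbour; False means contradiction."""
    stack = [start]
    while stack:
        x, y = stack.pop()
        for nx, ny in neighbours(x, y, w, h):
            keep = {t for t in grid[ny][nx]
                    if any(compatible(t, s) for s in grid[y][x])}
            if not keep:
                return False
            if keep != grid[ny][nx]:
                grid[ny][nx] = keep
                stack.append((nx, ny))
    return True


def attempt(w, h, rng):
    grid = [[set(TILES) for _ in range(w)] for _ in range(h)]
    while True:
        undecided = [(x, y) for y in range(h) for x in range(w)
                     if len(grid[y][x]) > 1]
        if not undecided:  # everything observed: the "wave function" collapsed
            return [[next(iter(cell)) for cell in row] for row in grid]
        # Observation: lowest entropy first, ties broken randomly.
        x, y = min(undecided,
                   key=lambda c: (entropy(grid[c[1]][c[0]]), rng.random()))
        options = sorted(grid[y][x])
        choice = rng.choices(options, weights=[WEIGHTS[t] for t in options])[0]
        grid[y][x] = {choice}
        # Propagation: spread the new information through the grid.
        if not propagate(grid, (x, y), w, h):
            return None


def generate(w, h, seed=0, max_tries=100):
    rng = random.Random(seed)
    for _ in range(max_tries):
        result = attempt(w, h, rng)
        if result is not None:
            return result
    raise RuntimeError("only contradictions, giving up")


if __name__ == "__main__":
    for row in generate(12, 6):
        print(" ".join(tile[0] for tile in row))
```

With this toy rule set, sea and land never touch directly; there is always at least one coast cell in between.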

retina blasting

Hain, Bamberg

絵描きさんの作業環境が見たい – I want to see artists’ work environments

We are using computers every day and we are doing this in many different environments. Some of us give their desk space and work environment some more thought.

If you want some inspiration regarding your desk and work space, take a look at this great Twitter Hashtag: #絵描きさんの作業環境が見たい

It means “I want to see artists’ work environments” and has been used for some years now by Japanese artists to post pictures of their work environments…

I also posted mine, despite not being an artist.

Oh come on…

And to spice things up a bit more:

it’s this time of the year again

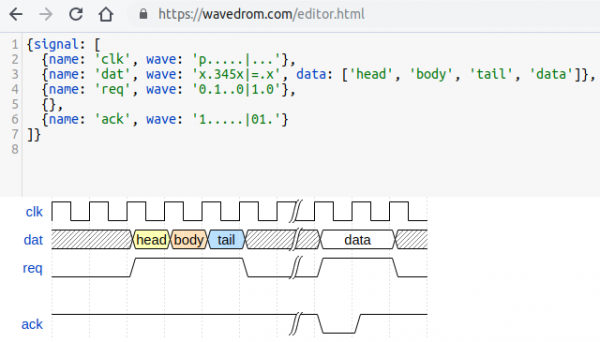

technical visualization tools

There’s so much happening in this field as visualizations become more powerful and easier to create.

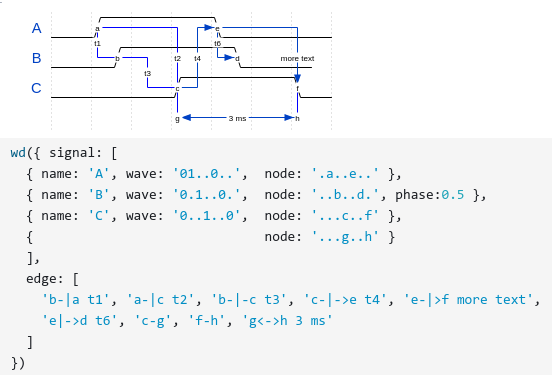

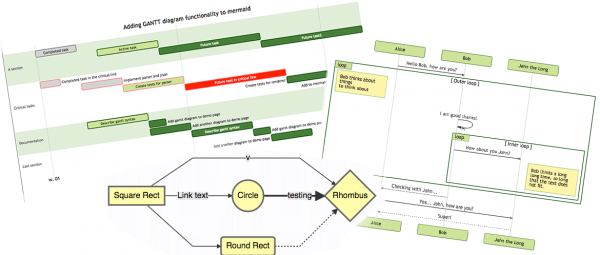

WaveDrom

WaveDrom draws your Timing Diagram or Waveform from simple textual description. It comes with description language, rendering engine and the editor.

WaveDrom editor works in the browser or can be installed on your system. Rendering engine can be embedded into any webpage.

https://wavedrom.com/
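To give an idea of what such a textual description looks like, here is a small sketch that produces WaveDrom input (WaveJSON) from Python. It assumes the basic format with a signal list of name/wave entries, where p draws a clock, 0 and 1 are levels and a dot continues the previous state. The helper names and the example signals are made up.

```python
import json


def to_wave(bits: str) -> str:
    """Turn a bit string like '00110' into WaveDrom notation like '0.1.0'."""
    out = []
    prev = None
    for bit in bits:
        out.append("." if bit == prev else bit)
        prev = bit
    return "".join(out)


def wavejson(signals: dict[str, str]) -> str:
    """Build a WaveJSON document with a clock and one lane per signal."""
    cycles = max(len(bits) for bits in signals.values())
    lanes = [{"name": "clk", "wave": "p" + "." * (cycles - 1)}]
    for name, bits in signals.items():
        lanes.append({"name": name, "wave": to_wave(bits)})
    return json.dumps({"signal": lanes}, indent=2)


if __name__ == "__main__":
    print(wavejson({"req": "01100", "ack": "00110"}))
```

The printed JSON can be pasted into the WaveDrom editor to see the rendered diagram.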

MermaidJS

Generation of diagrams and flowcharts from text in a similar manner as markdown.

Ever wanted to simplify documentation and avoid heavy tools like Visio when explaining your code?

This is why mermaid was born, a simple markdown-like script language for generating charts from text via javascript. Try it using our editor.
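As a small illustration of how little text a diagram needs, this sketch turns a list of edges into a mermaid flowchart definition. It assumes the basic graph syntax with a direction keyword and --> arrows. The function name and the example nodes are my own.

```python
def mermaid_flowchart(edges, direction="TD"):
    """Return mermaid flowchart text for a list of (source, target) pairs."""
    lines = [f"graph {direction}"]
    for src, dst in edges:
        lines.append(f"    {src} --> {dst}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Made-up example: the steps of a photo workflow
    print(mermaid_flowchart([("Phone", "HEIC"), ("HEIC", "JPEG"), ("JPEG", "Blog")]))
```

Paste the output into the mermaid editor to get the rendered chart.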

上野

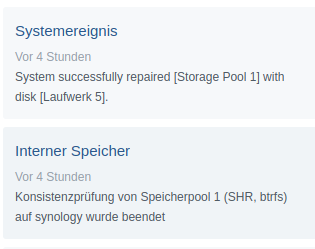

update: storage array synced

All is good. No data has been harmed in the process. Now drive #8 needs to be replaced.